I'm watching companies negatively affect their business by rushing to publish AI-generated content. They're chasing mentions in ChatGPT and other AI search engines, but they're actually hurting themselves in ways they don't realize.

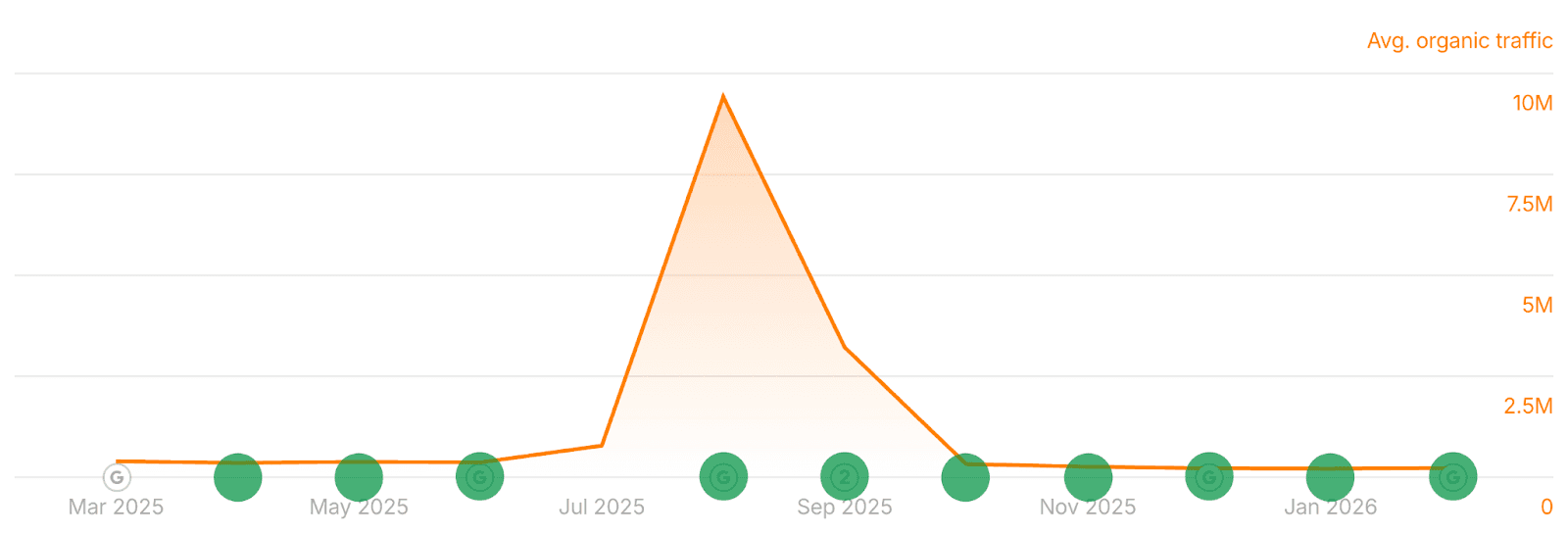

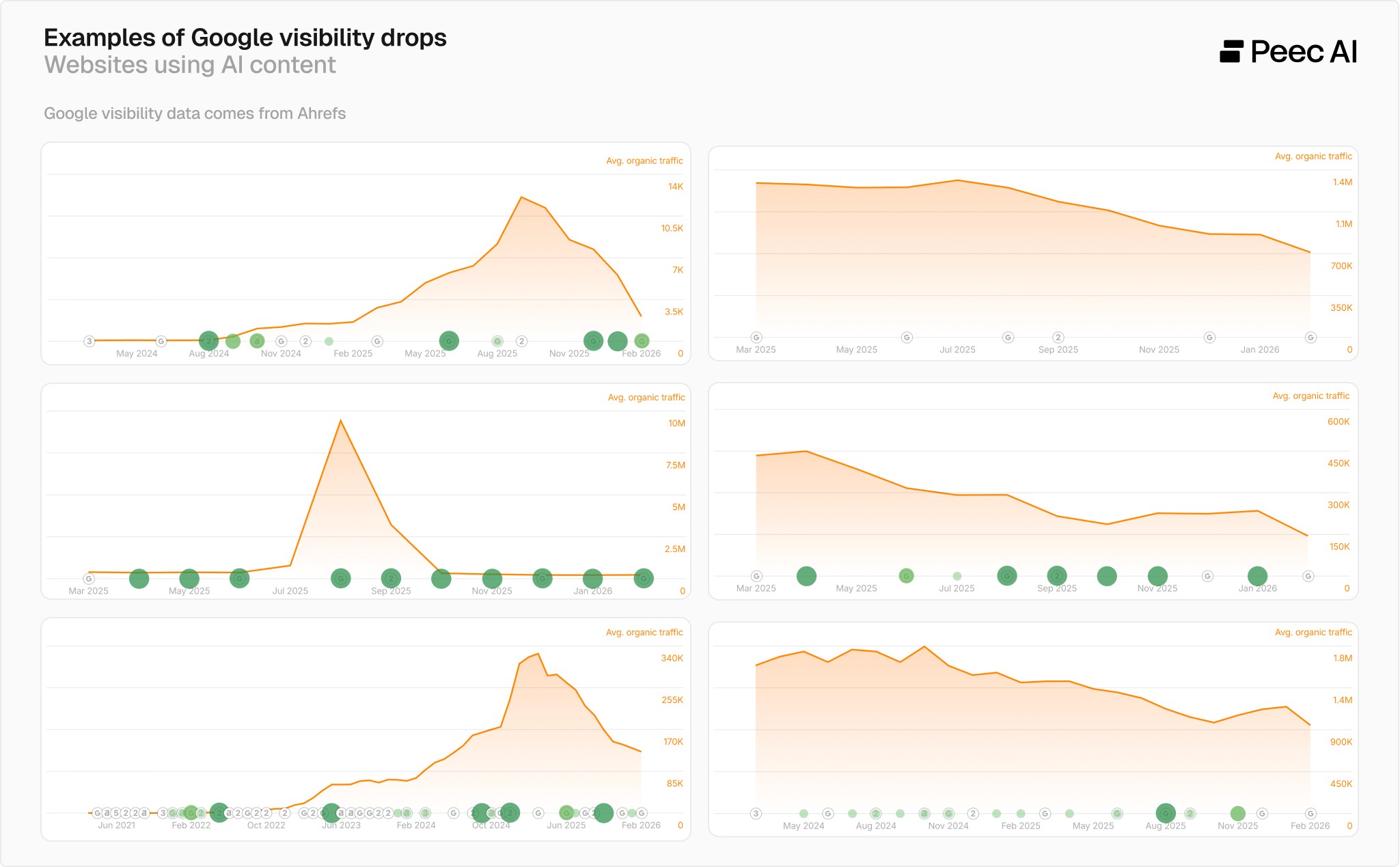

We looked at the client portfolios of a few companies offering AI content generation and, no surprise, those clients commonly had massive visibility drops in Google.

Evidence shows that low-quality AI content can tank your online visibility. Google and Bing have advanced detection algorithms, and they can also manually adjust rankings or completely remove websites from search results. Since large language models (LLMs) use these search engines during the research process (also known as grounding), losing search visibility can also hurt your visibility in LLMs.

Using AI as a writing assistant is usually fine. But publishing raw AI output at scale for your company blog without adding anything of value can backfire. I'm sharing this because I keep seeing tools promote AI content generation with no warnings about these risks.

This article is about what the data actually shows - and what to do to make your content useful for users, SEO, and LLMs.

How AI content sites lose visibility

Sites with poor quality AI content follow a predictable path - they rank for a while, then they drop. Sometimes dramatically.

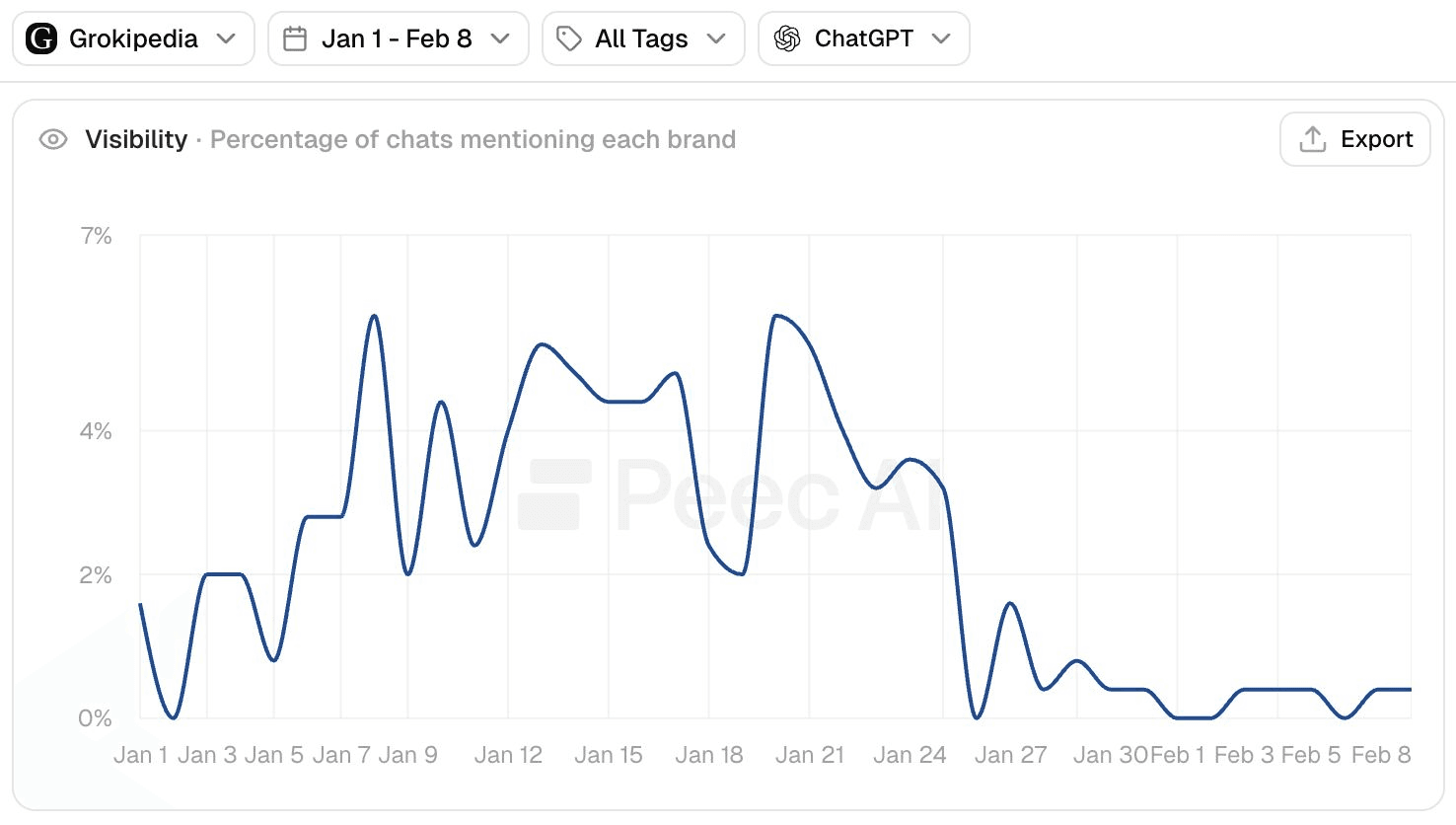

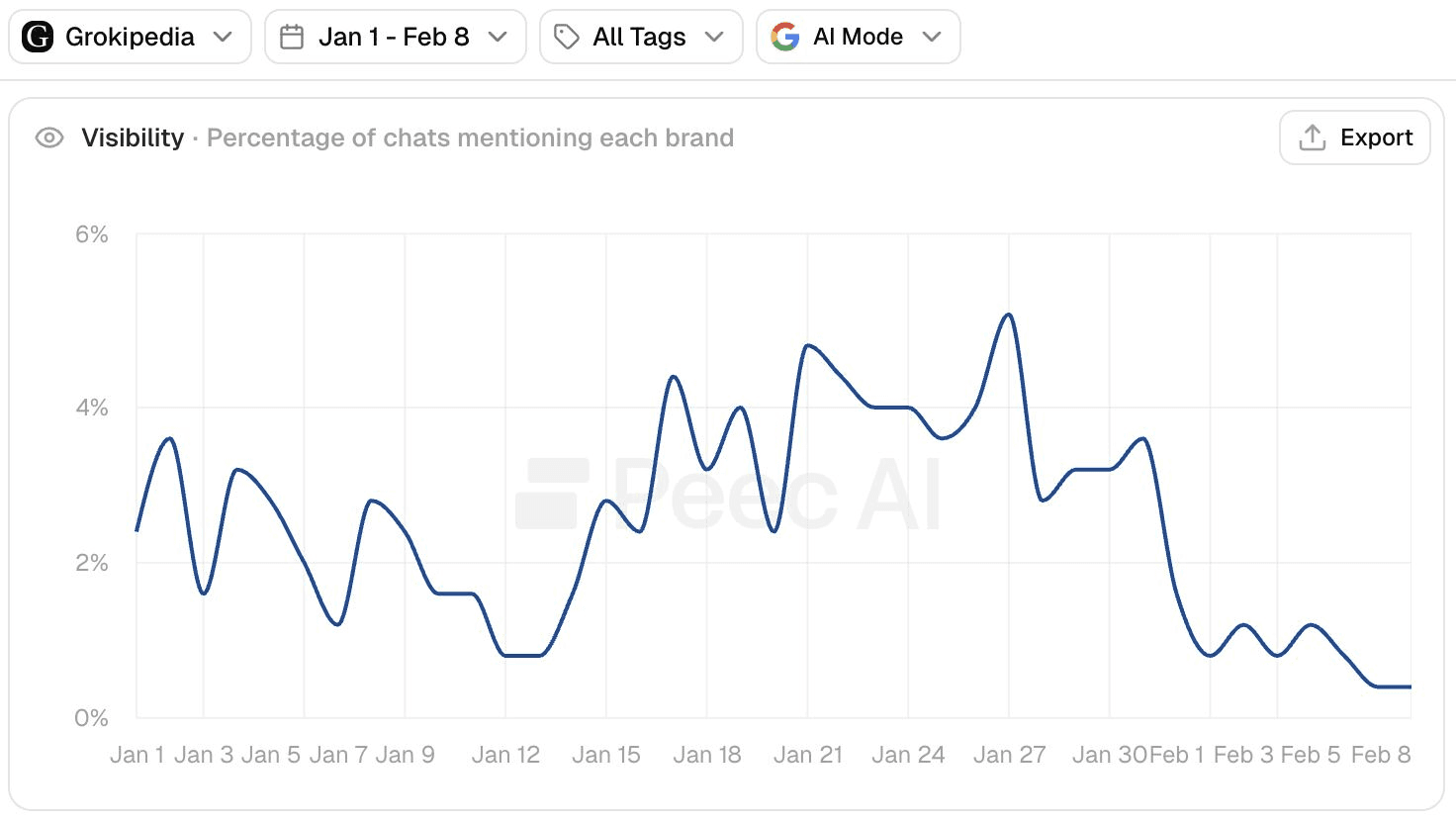

Take Grokipedia, an AI-generated version of Wikipedia powered by Grok, as an example. It gained traction, then started losing visibility in Google in late January / early February 2026. Multiple SEO experts, including Lily Ray and Glenn Gabe, documented the decline.

Additionally, Malte Landwehr, CMO at Peec AI, pointed out something crucial on LinkedIn: answer engines started recommending Grokipedia less often at exactly the same time that Grokipedia lost visibility in Google.

He tracked this across ChatGPT, AI Mode, and AI Overviews. In the screenshot below, you can see all three answer engines reduced Grokipedia citations in late January / early February, which was the exact moment its Google rankings dropped.

Another example showing the risk of low-quality AI content: in 2024, Google announced new spam policies, and multiple websites were removed entirely from its search results. Originality.AI analyzed the aftermath and found that 100% of affected sites had AI-generated posts, with half of those sites having 80–90% of their content generated by AI.

Case after case, the same story.

The risk of using AI content generation tools

We took a close look at one of the most popular AI content generation tools that promise to help brands "win" in AI-powered search engines like ChatGPT, Perplexity, and Claude.

My colleague Malte Landwehr analyzed the tool and noticed that 36% of the brands included in its success stories section show a typical "Mount AI" visibility/traffic trend in Google: a steep climb followed by a massive traffic drop.

And this isn't an isolated problem with one AI content generation tool. When I looked at other platforms, it was even worse: for one, 3 out of 4 brands (all major global names) featured on its website had suffered significant visibility losses. That's 75% of the clients they were actively using to market themselves.

Based on what we noticed, it's pretty common for websites using AI content to see traffic drops in Google, as you can see in the collage below of several brands we analyzed:

The Google visibility charts are powered by Ahrefs, a widely used SEO tool.
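To make the "Mount AI" pattern concrete, here is a minimal sketch of how you could flag it in your own visibility exports. It assumes a simple list of visibility scores per brand. The function names, the 50% drop threshold, and the sample numbers are all hypothetical and chosen for illustration; they are not the method or the data behind the charts above.

```python
from typing import Sequence


def peak_to_latest_drop(visibility: Sequence[float]) -> float:
    """Return the fractional drop from the series peak to its latest value."""
    if not visibility:
        raise ValueError("visibility series is empty")
    peak = max(visibility)
    if peak <= 0:
        return 0.0
    return (peak - visibility[-1]) / peak


def looks_like_mount_ai(visibility: Sequence[float], min_drop: float = 0.5) -> bool:
    """Flag a rise-then-collapse shape.

    Assumption: "Mount AI" means the series first rises to a peak and then
    ends at least min_drop (50% by default, an arbitrary choice) below it.
    """
    if len(visibility) < 3:
        return False
    peak_index = max(range(len(visibility)), key=lambda i: visibility[i])
    rose_first = peak_index > 0 and visibility[peak_index] > visibility[0]
    return rose_first and peak_to_latest_drop(visibility) >= min_drop


# Hypothetical monthly visibility scores, NOT data from the brands in this article.
brands = {
    "brand_a": [10, 25, 60, 90, 40, 15],  # climbs, then collapses
    "brand_b": [20, 22, 25, 27, 30, 31],  # steady growth, no collapse
    "brand_c": [5, 30, 80, 70, 20, 10],   # climbs, then collapses
    "brand_d": [50, 48, 52, 55, 20, 12],  # small rise, then collapses
}

flagged = [name for name, series in brands.items() if looks_like_mount_ai(series)]
share = len(flagged) / len(brands)
print(f"{len(flagged)} of {len(brands)} brands flagged ({share:.0%})")
```

A check like this is only a first filter. A seasonal business or a site migration can produce a similar shape, so any flagged brand still needs a manual look.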

Why losing Google rankings kills AI search visibility

You might be thinking, “OK, but this is just Google, my priority is ChatGPT.”

The Grokipedia case shows that when you lose rankings in Google, you don't just lose Google traffic. The impact carries over to AI search engines, too:

Traditional Google Search: Obvious impact

AI Overviews & AI Mode: Google's own AI features cite lower-ranked pages less often

ChatGPT: There's evidence ChatGPT uses Google Search extensively, including for product recommendations via Google Shopping

Grok: Also uses Google Search

One Google penalty, and suddenly you're invisible everywhere. That visibility loss creates other problems too.
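The dependency described in this section can be sketched as a toy model. It assumes a hypothetical answer engine that only cites pages from the top few search results it retrieves during grounding. Real answer engines are more complex and not fully documented, so `SearchResult`, `grounded_citations`, the `top_k` cutoff, and the domains below are illustrative assumptions, not a description of how ChatGPT, Grok, or Google's AI features actually work.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SearchResult:
    domain: str
    rank: int  # 1 is the top organic position


def grounded_citations(results: list[SearchResult], top_k: int = 3) -> list[str]:
    """Return the domains a hypothetical answer engine would cite.

    Assumption: the engine only cites pages among the top_k search results.
    """
    ranked = sorted(results, key=lambda r: r.rank)
    return [r.domain for r in ranked[:top_k]]


# Hypothetical search results before and after a ranking drop for example.com.
before = [
    SearchResult("example.com", 2),
    SearchResult("competitor-a.com", 1),
    SearchResult("competitor-b.com", 3),
    SearchResult("competitor-c.com", 4),
]
after = [
    SearchResult("example.com", 40),
    SearchResult("competitor-a.com", 1),
    SearchResult("competitor-b.com", 2),
    SearchResult("competitor-c.com", 3),
]

print("example.com" in grounded_citations(before))  # cited while it ranks in the top 3
print("example.com" in grounded_citations(after))   # not cited after the ranking drop
```

The point of the sketch is the structure, not the numbers: if the retrieval step sits on top of a search index, anything that pushes you out of that index's top results also removes you from the pool of pages the answer engine can cite.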

Important considerations while creating AI content

1. Loss of user trust

This is the biggest risk, especially if you're an established brand. Users can quickly spot generic, low-effort AI content (what many call "slop"), which immediately hurts your credibility. You lose one client's trust today, then another tomorrow, and it compounds.

You might rank #1 in Google and show up in ChatGPT responses, but when someone clicks through and sees your site is full of generic AI content, you've lost a potential customer and signaled to Google and Bing that your content isn't helpful.

The concerning part is that some companies are selling AI content generation (often very poor quality) as the solution to SEO and AI search visibility without mentioning the risks or explaining that it might actually hurt traditional search and AI search visibility.

2. Google can detect poor quality AI content

A lot of people think AI models now produce content so good that Google can't tell the difference between AI and human writing, but the evidence suggests otherwise.

Back in 2020-2021, Google published research where they tested AI detectors on 500 million web pages. They found that content flagged as machine-generated was consistently low quality - incoherent and poorly written. It seems very unlikely to me Google just stopped working on this.

One of the researchers from that study, Dara Bahri, is today a Research Scientist on the Gemini team at Google DeepMind, specifically working on detecting AI-generated content.

He recently published a research paper on detecting watermarked language models (2026). His research found that non-watermarked detection methods were actually more accurate than watermarked ones in some cases. He also discussed white-box approaches - methods that only model owners can use because they require direct access to the model's probability distributions. This suggests that companies like OpenAI, Google, Perplexity, and Anthropic can be more successful at detecting AI content when it was generated by their own models.

Google actively fights low-quality content. It has AI systems like SpamBrain, plus 16,000 human contractors on its Search Quality Raters team who flag low-quality AI content.

In 2025, Google updated its Quality Rater Guidelines specifically to target "low-effort" AI content. Quality raters now mark mass-produced pages with no original content as "Lowest" quality, regardless of how they were created.

In machine learning, this kind of human-labeled data is called a "golden set" - the benchmark Google uses to train and test its algorithms. Those 16,000 people manually create examples of good content versus bad content, which become the training data for Google's automated systems.

3. Negative user signals

Even if we assumed Google couldn't detect low quality AI content directly - which seems pretty unlikely at this point - there's still user behavior to consider.

Google's antitrust trials revealed that it uses user signals. That means when someone clicks through to your site, sees low-quality AI content, and bounces right back to Google, it sends a clear signal that something's wrong.

Bing has been open about using user signals for years. Google denied it for a long time, but its own patents and revelations from the antitrust trials confirmed it’s doing it too.

Every time a user bounces from your AI-generated page, you lose a potential customer and signal to Google and Bing that your content isn't helpful.
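If you want to watch this behavior on your own pages, a rough proxy is the share of visits that return to the search results almost immediately. The sketch below is a minimal example under stated assumptions: the `Visit` record, its fields, the 10-second threshold, and the sample visits are all hypothetical, and this is not how Google or Bing compute their user signals.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Visit:
    url: str
    seconds_on_page: float
    returned_to_search: bool  # True if the user went back to the results page


def short_click_rate(visits: list[Visit], max_seconds: float = 10.0) -> float:
    """Share of visits where the user returned to search within max_seconds.

    The 10-second default is an arbitrary assumption for illustration.
    """
    if not visits:
        return 0.0
    short = sum(
        1 for v in visits
        if v.returned_to_search and v.seconds_on_page <= max_seconds
    )
    return short / len(visits)


# Hypothetical visits to a single page, not real analytics data.
visits = [
    Visit("/blog/ai-post", 4.0, True),
    Visit("/blog/ai-post", 6.5, True),
    Visit("/blog/ai-post", 95.0, False),
    Visit("/blog/ai-post", 8.0, True),
]

rate = short_click_rate(visits)
print(f"short-click rate: {rate:.0%}")
```

Comparing this rate between your AI-assisted pages and your hand-written ones is more useful than looking at the absolute number, since a "normal" value varies a lot by query type.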

4. Site-wide quality impact

Google doesn't just judge individual pages. It looks at your content sections (like your blog) and your entire website.

If you mix low-quality AI content in with your high-quality work, you risk hurting your entire site through a penalty or when Google's algorithm re-evaluates your website’s overall quality.

I've seen this happen multiple times. A site ranks fine for months. Then, either a manual penalty hits or a core algorithm update reassesses the site. When it happens, it's usually sudden and the drop is severe.

5. Future algorithm changes

Google's Quality Rater Guidelines suggest the company is moving toward content with genuine human experience and expertise.

Since quality raters test algorithm changes, there's a strong likelihood that future algorithms will increasingly favor human-generated content with real insights.

We already see plenty of evidence that Google wants to move toward high-quality content, including the Information Gain patent.

When can AI content be actually useful?

Not all AI content use is risky. One of the industry's most respected voices puts this in perspective.

Lily Ray is a long-time advocate for value-added content and for setting the standard for E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness). She shares:

AI can be incredibly useful for improving efficiency in the content creation process - from ideation and outlining to editing, summarizing, and refining language. But we've also seen repeatedly that overreliance on purely AI-generated content, especially when it's scaled quickly without meaningful originality, can seriously damage SEO performance (and ultimately visibility in AI-driven search as well). The key is ensuring AI-supported content still includes unique insights, firsthand expertise, original research, or unique perspectives and opinions that competitors can't also copy-paste from an LLM response.

This distinction matters. Using AI to help you write better is fine, but using it to replace your expertise and just publish at scale is where companies run into trouble.

How to use AI content safely

I don't think Google is going to penalize all AI content. Plenty of people use AI tools to improve their writing - making it clearer, tighter, and more engaging. Google gets that.

But low quality AI-generated content is something that Google actively fights against.

Here are the key principles if you're using AI for content:

Quality is everything. Google cares whether your content is good. Most raw AI output isn't good enough to be successful long-term.

Don't publish what AI gives you straight out of the box. Do your own research, add your perspective, and edit heavily. Make sure you're adding real value.

Bring your own insights. AI can't replicate your experience or unique perspective. That's what makes content valuable now.

Think of AI as your writing assistant, not your replacement. Use it to help you write better, not to write for you.

When I publish content, I ask myself two basic questions:

Is this an article I would like to read myself?

Does the article add value for people who read it? (Unique research, another view on the data, things that most people miss?)

I strongly encourage you to ask the same questions before you publish content generated by AI. It will only take a couple of minutes per article. If you're looking for more criteria, you'll find Google's guide on Creating helpful, reliable, people-first content useful.

My honest assessment on the future of AI content

Google will keep cracking down on low-quality AI content. The flood of AI spam has created real problems for search quality, and Google won’t ignore these issues.

Here's what I expect:

Google and major LLMs will get better at identifying low-quality AI content

Original perspectives will matter more than ever

Content that actually helps people will be rewarded

Will Google penalize all AI content? No, I don't think so. But you should stay away from low-quality content to protect:

Your Google rankings

Your visibility in AI search engines (ChatGPT, Perplexity, AI Overviews)

Your credibility and user trust