Citation rate may be your secret weapon

Part 1: URL/Source Gap Analysis

Part 2: Brand Mention Gap Analysis (coming soon)

TL;DR:

Using the average citation rate to find both high- and low-performing sources is a powerful technique in AI search. Set up a well-tagged prompt tracking project and filter citation sources for your domain. Next, filter for both the most and least cited URLs and use what you learn to inform your content strategy.

This guide will show you how to identify gaps where large language models (LLMs) like ChatGPT aren’t citing your content, and which pieces have the highest potential. It's designed for agencies, online publications, and journalistic outlets looking to demonstrate success with getting content cited by AI, and it requires no technical experience.

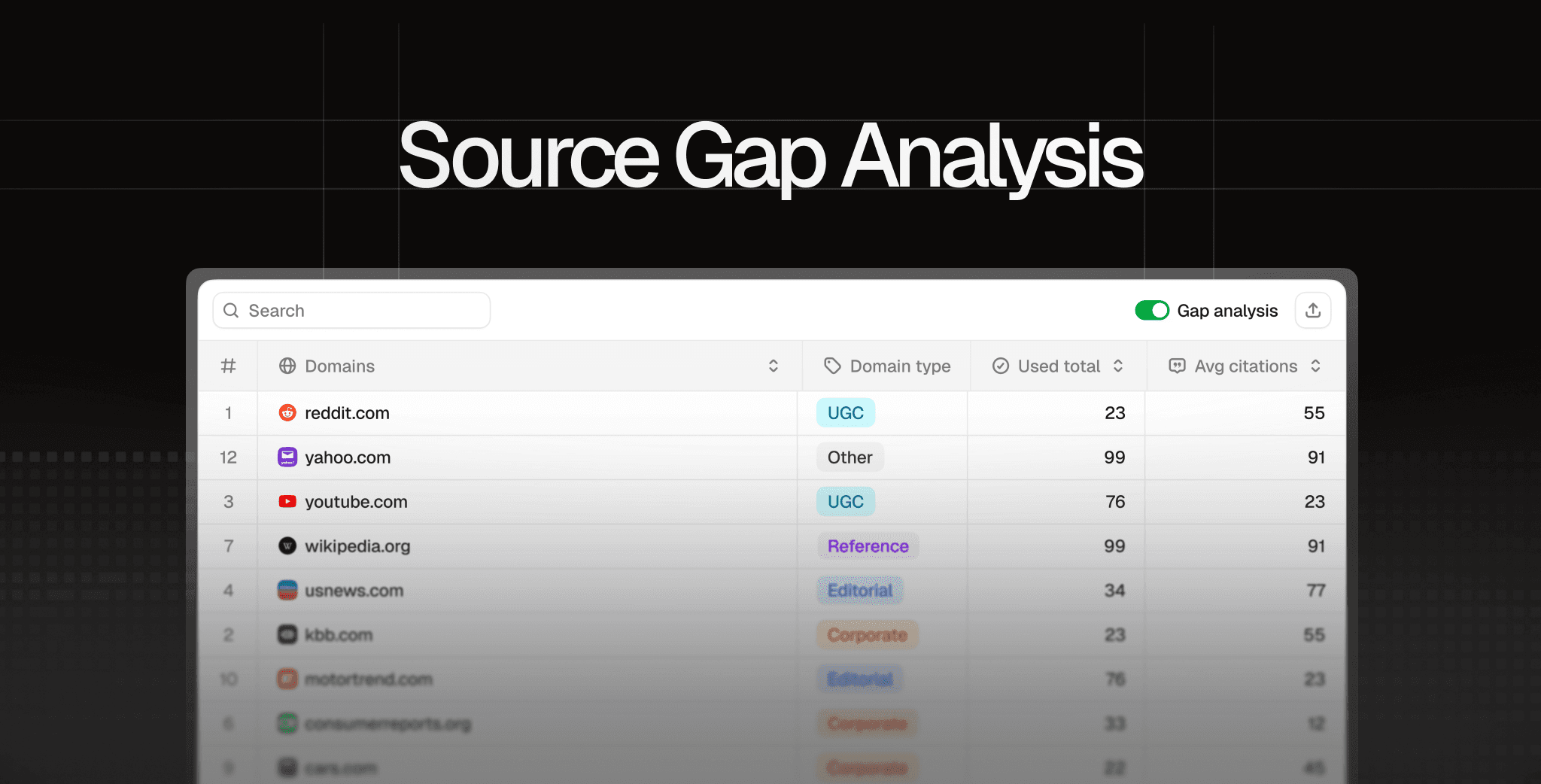

The method used in this guide works with any AI search tracking platform, like Peec AI, that shows citation data and source performance.

Why are AI citations important?

A recent study from Eight Oh Two claimed that 37% of users now start their purchase journey with AI search, as reported in Search Engine Land.

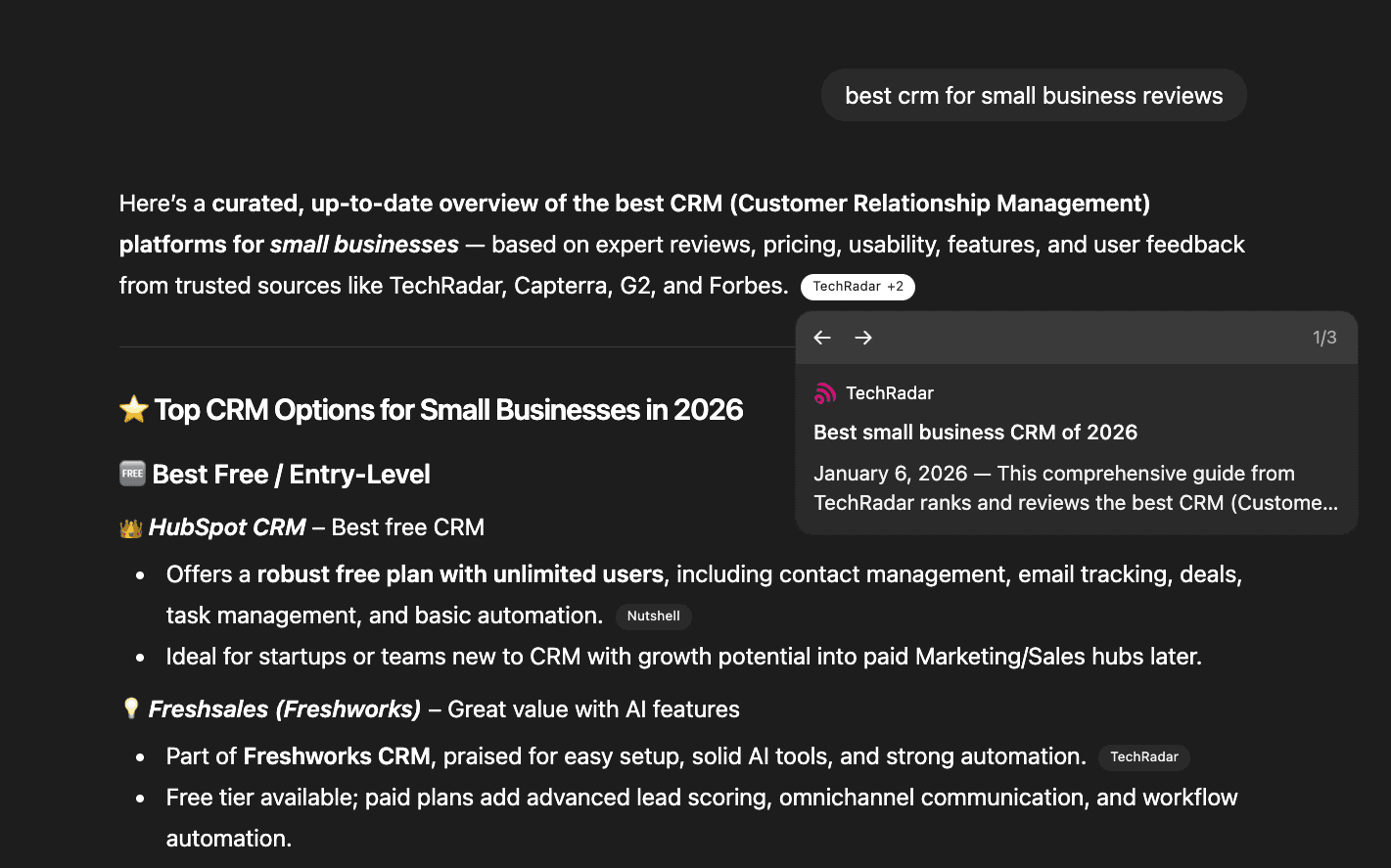

In practice, that might look like this. A user asks "best CRM software for small business" and ChatGPT responds with recommendations. If ChatGPT clearly cites TechRadar, perhaps even multiple times, that builds brand recognition in the user’s mind. Even if they don’t click the citation directly, they’re likely to visit TechRadar later to read the full reviews or explore more options.

But they would only know to take this step if TechRadar is clearly cited. That’s why for online publications, journals, and review sites, pursuing a citations-first strategy makes sense.

A brief refresher of how AI ranks URLs

Understanding how AI models choose sources helps explain why some content gets cited and other content doesn't. Here's the simplified process:

Searches for relevant content: When you ask a question, the AI searches Google for related articles (this process creates what's called "query fan-outs").

Gathers potential sources: It collects the most relevant articles it finds.

Ranks by quality signals: It evaluates each source based on factors like URL authority, content snippets, and page descriptions.

Selects final sources: It chooses which articles to actually read and potentially cite.

Creates the answer: It compiles information and decides which sources to mention in its response.

The four buckets of source performance

To understand where your content stands in AI search, it helps to think of all sources as falling into four buckets:

1. Content not retrieved: Your articles don't appear in the AI's initial search results at all

2. Content retrieved but not cited: Your articles are found and reviewed, but the AI chooses not to reference them in its answer

3. Content retrieved but not cited often: Your articles occasionally get cited, but inconsistently across similar queries

4. Content retrieved and cited often: Your articles are both found and frequently referenced in AI answers

Each bucket represents a different challenge. If you're in bucket 1, you need better SEO fundamentals. If you're in bucket 4, you're doing great.

But bucket 3 is where citation gap analysis becomes most valuable, because your content is already good enough to be considered, but something is preventing consistent citations.
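If you export retrieval and citation counts from your tracking tool, the four buckets can be sketched as a simple classification. This is a minimal illustration, not how any particular platform computes it: the function name, the input fields, and the `often_threshold` cut-off for "cited often" are all assumptions you would tune to your own data.

```python
from enum import Enum


class Bucket(Enum):
    NOT_RETRIEVED = 1
    RETRIEVED_NOT_CITED = 2
    RETRIEVED_CITED_RARELY = 3
    RETRIEVED_CITED_OFTEN = 4


def classify_url(times_retrieved: int, total_citations: int,
                 often_threshold: float = 1.0) -> Bucket:
    """Assign a URL to one of the four buckets.

    often_threshold is a hypothetical cut-off: a URL counts as
    "cited often" when its citations per retrieval exceed it.
    """
    if times_retrieved == 0:
        return Bucket.NOT_RETRIEVED
    if total_citations == 0:
        return Bucket.RETRIEVED_NOT_CITED
    average = total_citations / times_retrieved
    if average > often_threshold:
        return Bucket.RETRIEVED_CITED_OFTEN
    return Bucket.RETRIEVED_CITED_RARELY


# A URL retrieved 10 times but cited only 3 times lands in bucket 3.
print(classify_url(times_retrieved=10, total_citations=3))
```

URLs that come back as `RETRIEVED_CITED_RARELY` are the candidates for the gap analysis in this guide.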

Today's focus: Bucket 3

Today we will focus on bucket 3: URLs that AI models are already retrieving and reviewing, but not citing consistently. If LLMs are already retrieving them, we need to investigate why they are not actually being cited, or not cited very often.

Key definitions

The two key definitions we’ll use in this guide are:

Sources: All of the URLs retrieved by an LLM for a particular prompt.

Citations: At Peec AI, we define citations as URLs that were retrieved and used in the answer.

Below we see an example of TechRadar being cited in ChatGPT. The source is clearly used in the answer text:

Getting set up

You'll need a prompt tracking project, ideally with high-quality, well-tagged prompts. If you don’t have any prompts added to your project or want to improve existing ones, check out our extensive prompt selection guide.

Today, we will look at a test project in the SaaS space from the perspective of an online magazine trying to increase its presence in AI answer sources.

Step 1: Look at the big picture

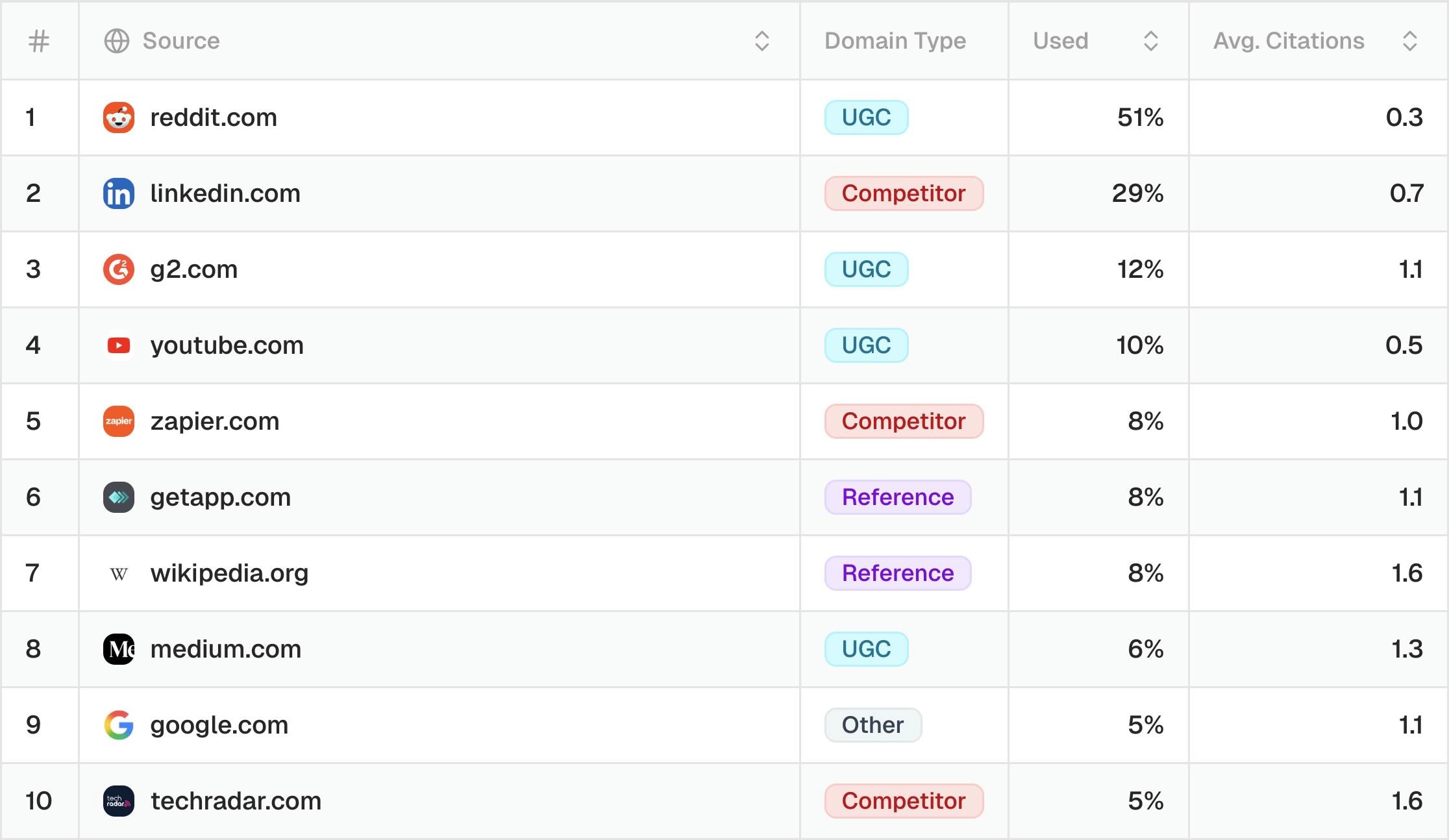

Looking at the data, you want to identify which domains consistently appear in AI answers (high "Used" percentage) and get cited frequently (high "Avg. Citations"). This shows you who your real competitors are in AI search.

From the perspective of TechRadar, we can see that:

In terms of review sites, G2 and GetApp.com are very influential

In terms of UGC, Reddit, YouTube, and LinkedIn are influential

For journalistic and listicle content, TechRadar is up against Zapier and PCMag

What is the average citation rate?

The average citation rate tells us how often a domain is used in the AI answer on average. Using our TechRadar example above, we can see that for Reddit, it's just 0.3 across AI models.

This is because AI search often retrieves two to three Reddit URLs but only uses one of them in the answer. The citation rate is model-specific and requires some analysis, because different models cite content in different ways.
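To make the Reddit example concrete, here is a rough sketch of how a per-domain average could be worked out from raw data. It assumes the rate is total citations divided by the number of retrieved URLs, which matches the Reddit explanation above but may not be the exact formula your platform uses. The rows and the `average_citation_rate` helper are made up for illustration.

```python
from collections import defaultdict
from urllib.parse import urlparse

# Hypothetical export rows: (prompt, retrieved URL, citations in the answer)
rows = [
    ("best crm for small business", "https://www.reddit.com/r/a", 1),
    ("best crm for small business", "https://www.reddit.com/r/b", 0),
    ("best crm for small business", "https://www.reddit.com/r/c", 0),
    ("best crm for small business", "https://www.techradar.com/best/crm", 2),
]


def average_citation_rate(rows):
    """Return citations per retrieved URL for each domain (assumed definition)."""
    retrieved = defaultdict(int)
    cited = defaultdict(int)
    for _prompt, url, citations in rows:
        domain = urlparse(url).netloc.removeprefix("www.")
        retrieved[domain] += 1
        cited[domain] += citations
    return {domain: cited[domain] / retrieved[domain] for domain in retrieved}


rates = average_citation_rate(rows)
for domain, rate in rates.items():
    print(f"{domain}: {rate:.2f}")
```

With three Reddit URLs retrieved and only one of them cited once, the Reddit average in this toy data comes out at roughly a third, in line with the 0.3 seen in the project.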

Here are the general benchmarks for strong citation performance across models:

ChatGPT: Average citation rate > 2.5

Google AI Mode: Average citation rate > 1.2

Perplexity: Average citation rate 0.5 (Perplexity is much stingier about explicitly citing content than other AI models)
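If you want to check many domains or URLs against these benchmarks at once, a small lookup is enough. This is only a sketch: the `BENCHMARKS` table and `is_strong` helper are hypothetical names, and treating the Perplexity figure as a lower bound like the other two is an assumption on our part.

```python
# Benchmarks from the list above. Using 0.5 as a lower bound for
# Perplexity is an assumption; the other two are stated as "greater than".
BENCHMARKS = {
    "ChatGPT": 2.5,
    "Google AI Mode": 1.2,
    "Perplexity": 0.5,
}


def is_strong(model: str, avg_citation_rate: float) -> bool:
    """Return True when the rate beats the benchmark for that model."""
    return avg_citation_rate > BENCHMARKS[model]


print(is_strong("ChatGPT", 3.0))      # above the ChatGPT benchmark
print(is_strong("ChatGPT", 1.0))      # below the ChatGPT benchmark
print(is_strong("Perplexity", 0.6))   # above the assumed Perplexity bound
```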

The power of tagging your prompts

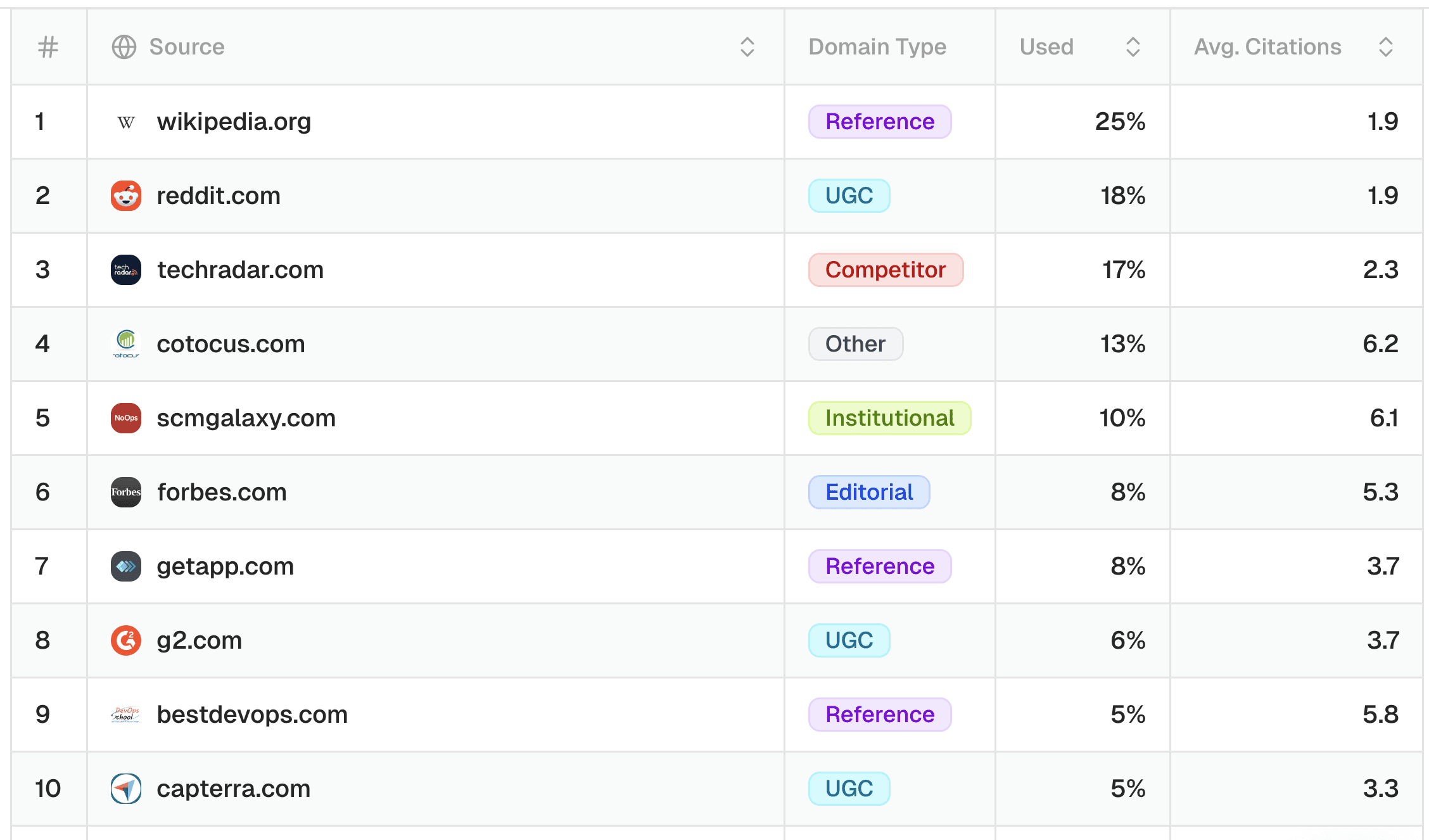

Now that we've seen TechRadar's overall performance, let's dig deeper using prompt tagging. When you set up your own tracking, you'll want to tag prompts not just by category but also by intent. This lets you analyze performance for the queries that matter most to your business.

For this project, I've tagged prompts by intent: informational, navigational/local, commercial, and transactional.

For now, we will filter on commercial and transactional intent for the model ChatGPT. These prompts are particularly valuable as they include product consideration and purchase intent.

We can see that TechRadar jumps to number three as a source, but we can also see it has one of the lower average citation rates compared to other domains.

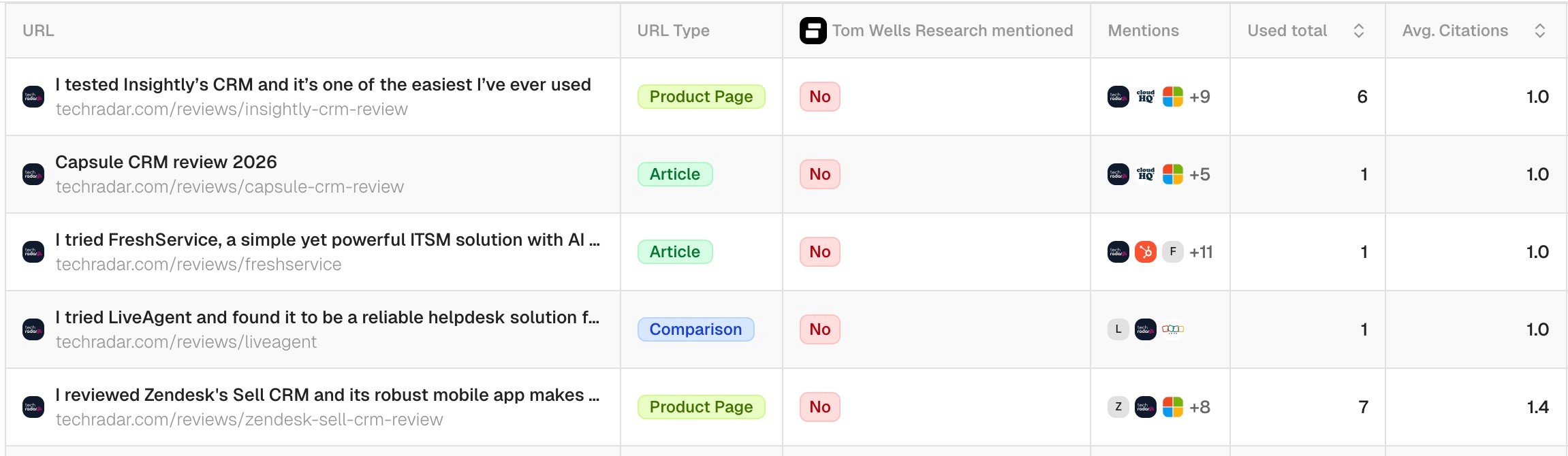

Step 2: Examine retrieved content by citation frequency

Now let's look at individual pieces of content to see which URLs are being retrieved but not cited often.

In a platform like Peec AI, you can view an overview of URL sources and filter by average citation rate. In the screenshot below we see articles that are retrieved but have an average citation rate of 1.0.

While 1.0 might seem fine, it’s actually the baseline - most sources that appear in ChatGPT get cited at least once. Content that ChatGPT really values typically has higher average citation rates.
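If you prefer to work from an export rather than the platform UI, the same filtering can be sketched in a few lines. Everything here is illustrative: the `UrlStat` fields, the example.com URLs, and the numbers are invented, and the 1.0 baseline is taken from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class UrlStat:
    url: str
    model: str
    intent: str           # prompt tag, e.g. "commercial"
    avg_citations: float  # average citation rate for this URL


BASELINE = 1.0  # "cited about once" baseline described in the text


def split_by_baseline(stats, model, intents, baseline=BASELINE):
    """Filter by model and intent tags, then split URLs around the baseline."""
    selected = [s for s in stats if s.model == model and s.intent in intents]
    low = sorted((s for s in selected if s.avg_citations <= baseline),
                 key=lambda s: s.avg_citations)
    high = sorted((s for s in selected if s.avg_citations > baseline),
                  key=lambda s: s.avg_citations, reverse=True)
    return low, high


# Hypothetical data for one domain
stats = [
    UrlStat("https://example.com/reviews/crm-a", "ChatGPT", "commercial", 1.0),
    UrlStat("https://example.com/best/crm-software", "ChatGPT", "commercial", 3.2),
    UrlStat("https://example.com/reviews/crm-b", "ChatGPT", "transactional", 0.5),
    UrlStat("https://example.com/news/crm-funding", "ChatGPT", "informational", 2.0),
    UrlStat("https://example.com/best/crm-software", "Perplexity", "commercial", 0.4),
]

low, high = split_by_baseline(stats, "ChatGPT", {"commercial", "transactional"})
print("At or below baseline:", [s.url for s in low])
print("Above baseline:", [s.url for s in high])
```

The `low` list is your set of retrieved-but-rarely-cited pages to review, while `high` shows the formats worth imitating.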

We can quickly see that many of these lower-cited articles are reviews for individual products or software. ChatGPT (like many LLMs) prefers content-rich pages, often covering multiple product reviews on a single page.

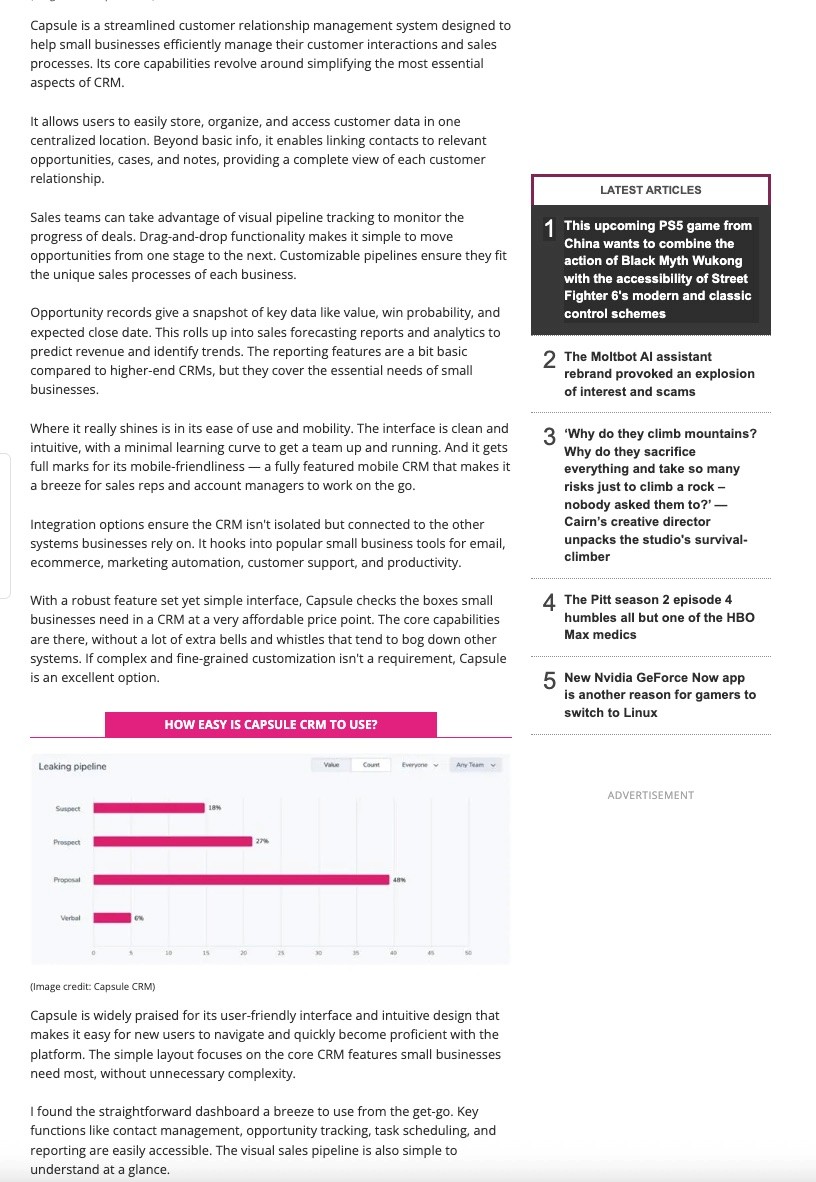

The formatting also matters. Many of these review articles lack clear headings and use long text blocks, making them harder for LLMs to parse than well-structured, organized content.

Example: A TechRadar review with almost no headings. LLMs prefer clear content chunks and structure.

Let’s move on to high citation frequency content.

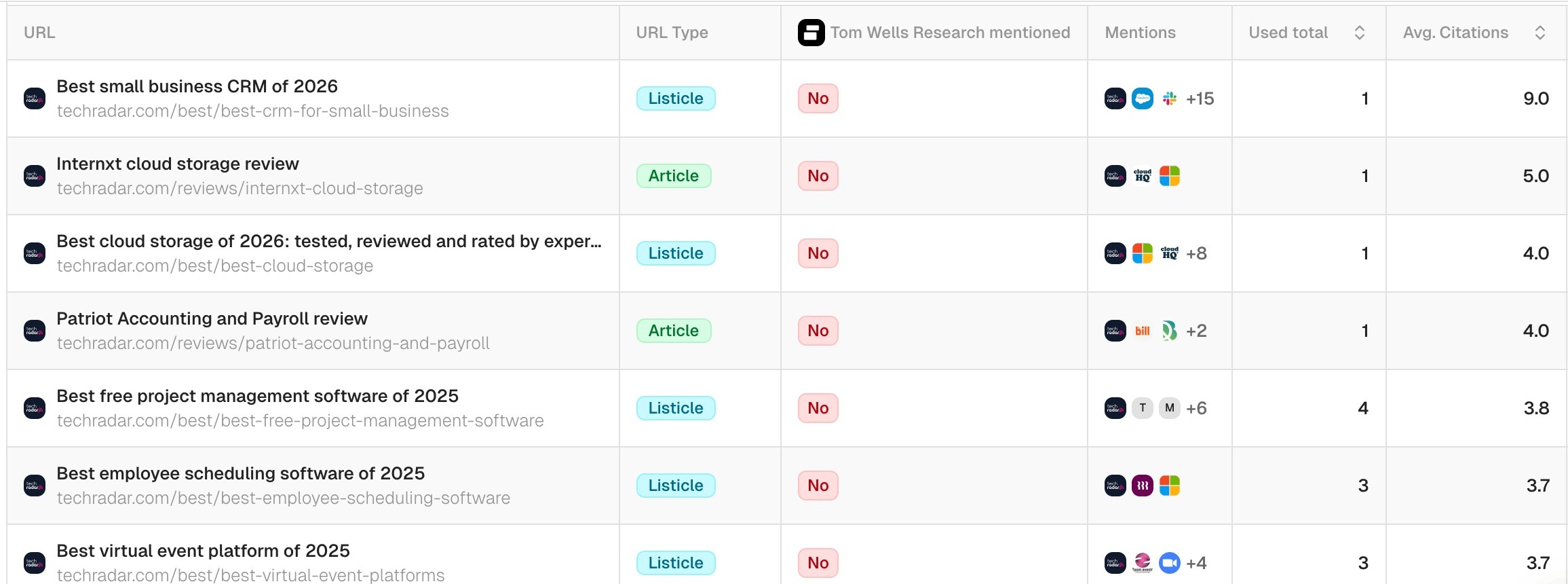

Step 3: Examine highly-cited content and adapt your strategy

Now let's look at what content AI likes to cite most often. We’ll filter for TechRadar URLs with the highest average citation rates. Remember: the better you tag your prompts for intent and category, the more granular insights you'll get.

Since we’re still focusing on commercial and transactional prompts in ChatGPT - the queries where users are researching or ready to buy - these insights directly impact TechRadar’s revenue-generating traffic, and the company would benefit from increasing its citations in AI search.

Using Peec AI, we can see at a glance that ChatGPT has a strong preference for listicles. This is because LLMs can retrieve information about multiple products at once from a single source.

What this means for your content strategy

Now that we can see which content types perform best, we can reverse-engineer a more effective approach. The gap between TechRadar's single product reviews (low citation rates) and their listicles (high citation rates) shows exactly where to focus optimization efforts.

This same pattern applies to your content. If you're seeing inconsistent citations, look for the divide between comprehensive content and individual pieces.

Recommendations for the content team

Based on this citation gap analysis, here’s how to act on these insights. While we’re using TechRadar as our example, these principles apply to any publication seeing similar patterns in its citation data:

Redraft single-product review content with clear, structured formatting: lists, bullets, and proper use of H tags.

Add a summary of key findings to the top of each page.

Link to a hub page that compiles a summary of the key findings from each piece, ensuring the pillar page is well formatted.

Combine multiple single review pages into “top 5 CRM tools” style listicles.

Track the effects over time by checking for an uplift in citation rate in your tracking software.
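One simple way to quantify the uplift is the relative change in average citation rate between two tracking periods. The helper below is a hypothetical sketch; the before and after values are invented, and you would substitute the figures from your own tracking software.

```python
def citation_uplift(before: float, after: float) -> float:
    """Relative change in average citation rate between two periods."""
    if before <= 0:
        raise ValueError("before must be positive to compute a relative change")
    return (after - before) / before


# Invented example: a reworked page moves from 1.0 to 1.5 average citations.
uplift = citation_uplift(before=1.0, after=1.5)
print(f"Uplift: {uplift:.0%}")
```

Compare like with like: use the same model, the same prompt tags, and periods of similar length, since citation behavior differs between models.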

Key takeaways

We learned how to use average citation rate to quickly identify content pieces that are being retrieved but not often cited. This citation gap analysis shows exactly where to focus your optimization efforts and helps inform your AI content strategy.

Coming next: We’ll dive deeper, looking at how to detect prompts you are not being retrieved for, as well as learn more about brand mention gap analysis.