TL;DR

AI search teams often don’t know what metrics to track and what benchmarks to use. The space is so new that very few standards have been developed.

We wanted to find out what a good citation rate looks like, i.e., how often top-performing content is cited in AI answers across ChatGPT, Google AI Mode, and Perplexity.

This study of over 1 million citations will provide you with concrete benchmarks and guidance that you can start using today to improve your AI analytics.

But first: Citations vs. retrievals

This is one of the most confusing parts of AI search. So, what is a retrieval and what is a citation?

A retrieval is when a webpage is retrieved by a large language model (LLM). It makes it into the list of candidates that the model might use to generate its answer.

A citation is when that webpage is actually used and clearly referenced in the AI answer.

The citation rate is the ratio between these two metrics: how many times a page is cited divided by how many times it is retrieved.

For brands that want their pages to be clearly referenced, such as online publications, making sure content is actually used (cited) and not just retrieved is an important part of any AI visibility strategy.
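As a rough sketch of the arithmetic, the citation rate can be computed from two counts per URL. The function name and the numbers are hypothetical and only illustrate the ratio; they are not taken from the study data.

```python
def citation_rate(citations: int, retrievals: int) -> float:
    """Average number of citations per retrieval for a single URL."""
    if retrievals <= 0:
        raise ValueError("retrievals must be positive")
    return citations / retrievals


# Hypothetical counts for illustration only.
print(citation_rate(citations=30, retrievals=20))  # 1.5
print(citation_rate(citations=5, retrievals=20))   # 0.25
```

A value above 1.0 means the page is cited more than once per retrieval on average, while a value below 1.0 means it is retrieved more often than it is cited.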

To visualize this, let's look at a few example screenshots:

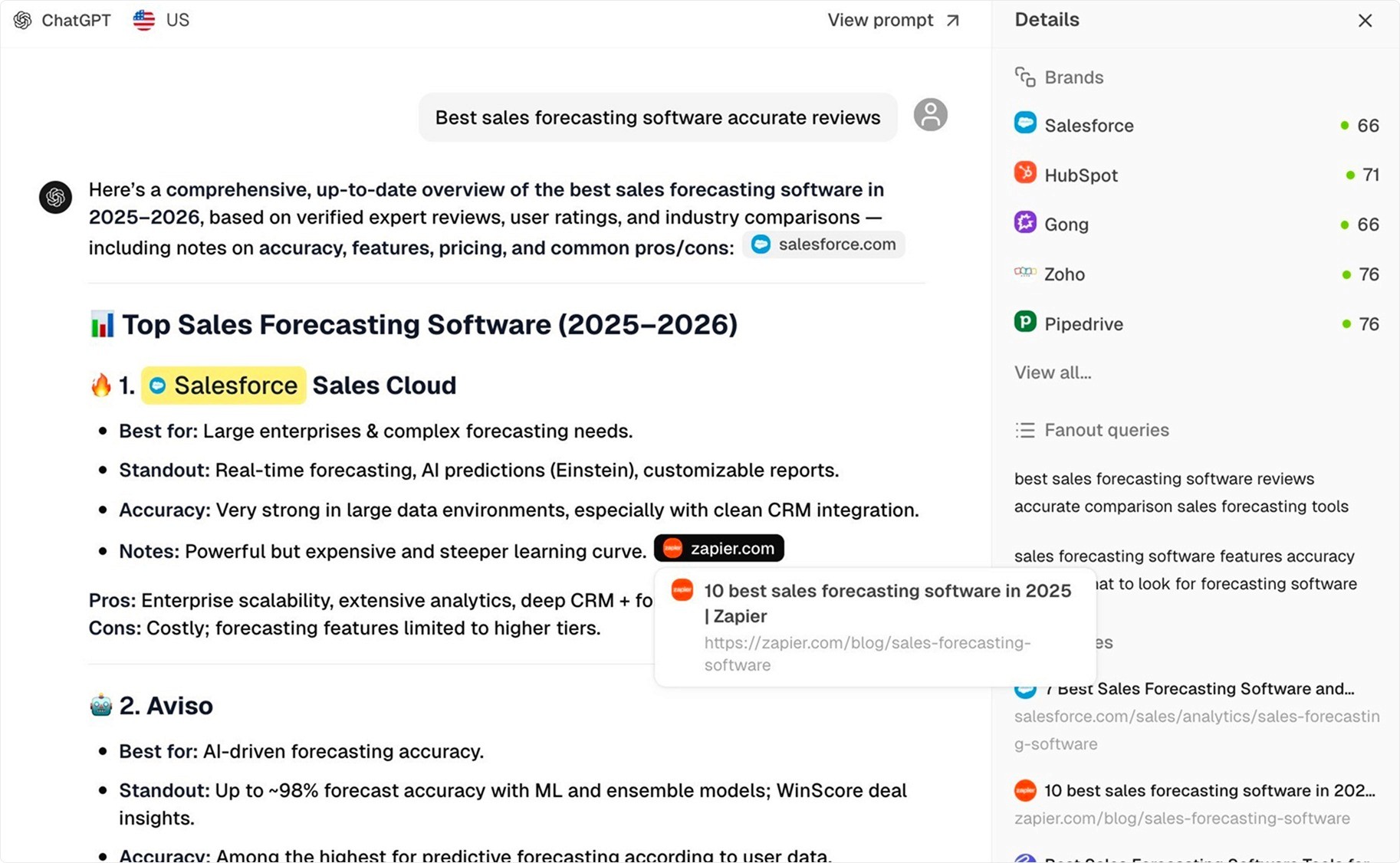

ChatGPT citation example

Here we see a Zapier article clearly cited in the ChatGPT response. There are also sources that were retrieved but not cited in the answer.

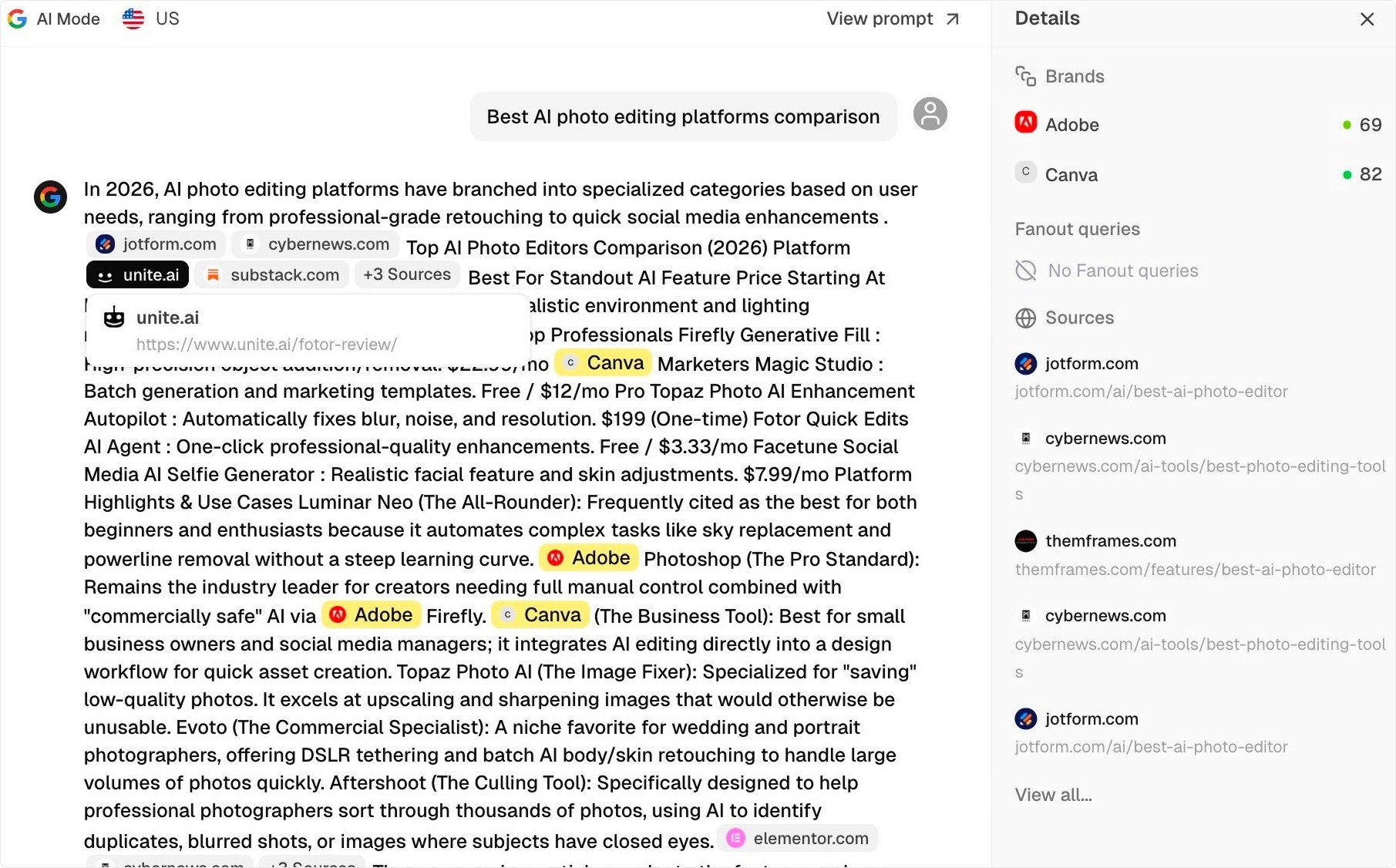

Google AI Mode citation example

For Google AI Mode, we see an article sourced from the domain unite.ai. Note that each AI model cites sources slightly differently, but for this study we are interested in which sources are used rather than which brands are mentioned.

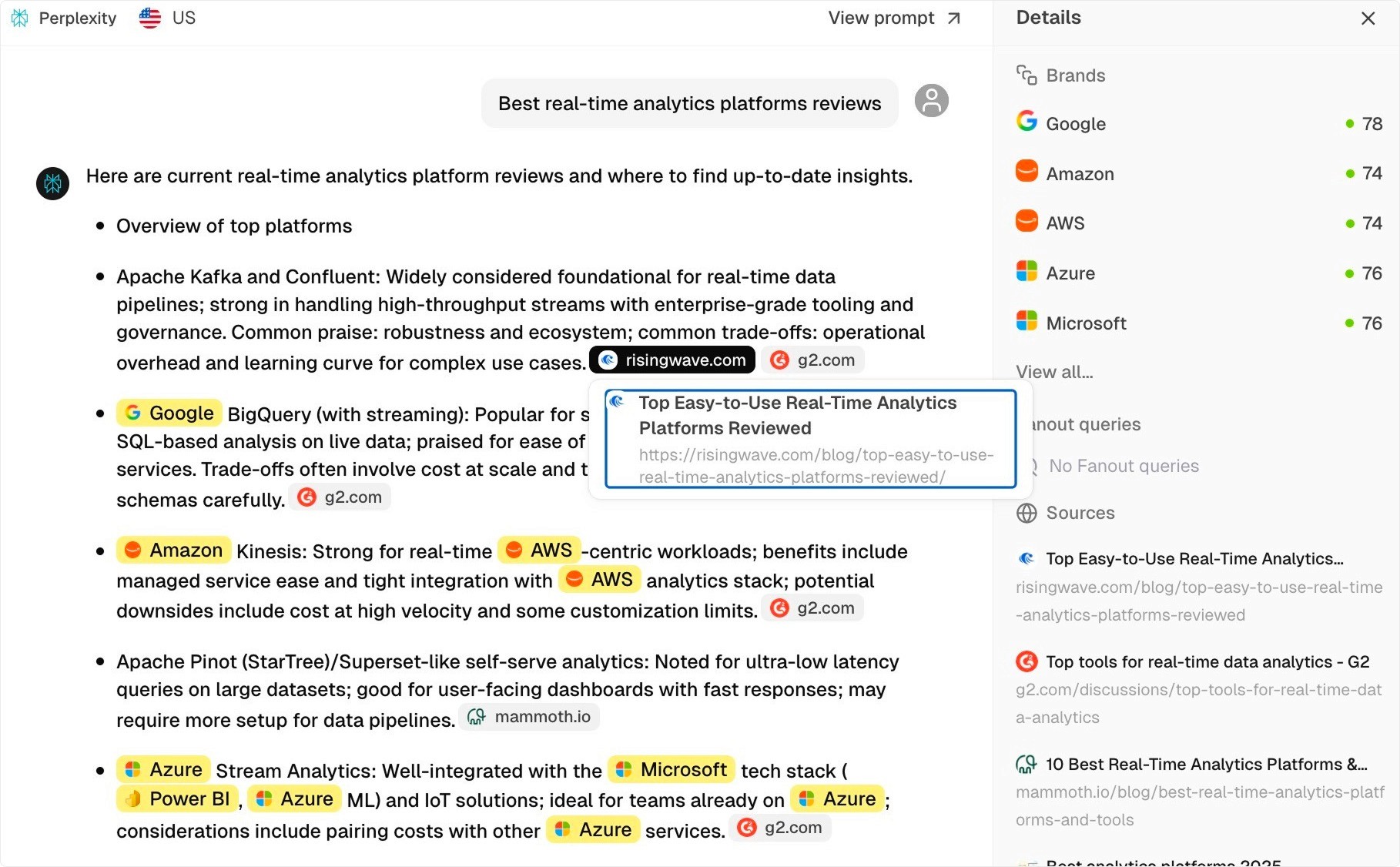

Perplexity citation example

For Perplexity, we see a source from the domain risingwave.com. You may also notice that the Perplexity response appears to contain fewer citations, which will be a common thread in this study.

A concrete example

One problem we have seen is the lack of good benchmarks for what citation rate to aim for. What is good? What is bad? And how do you even measure it?

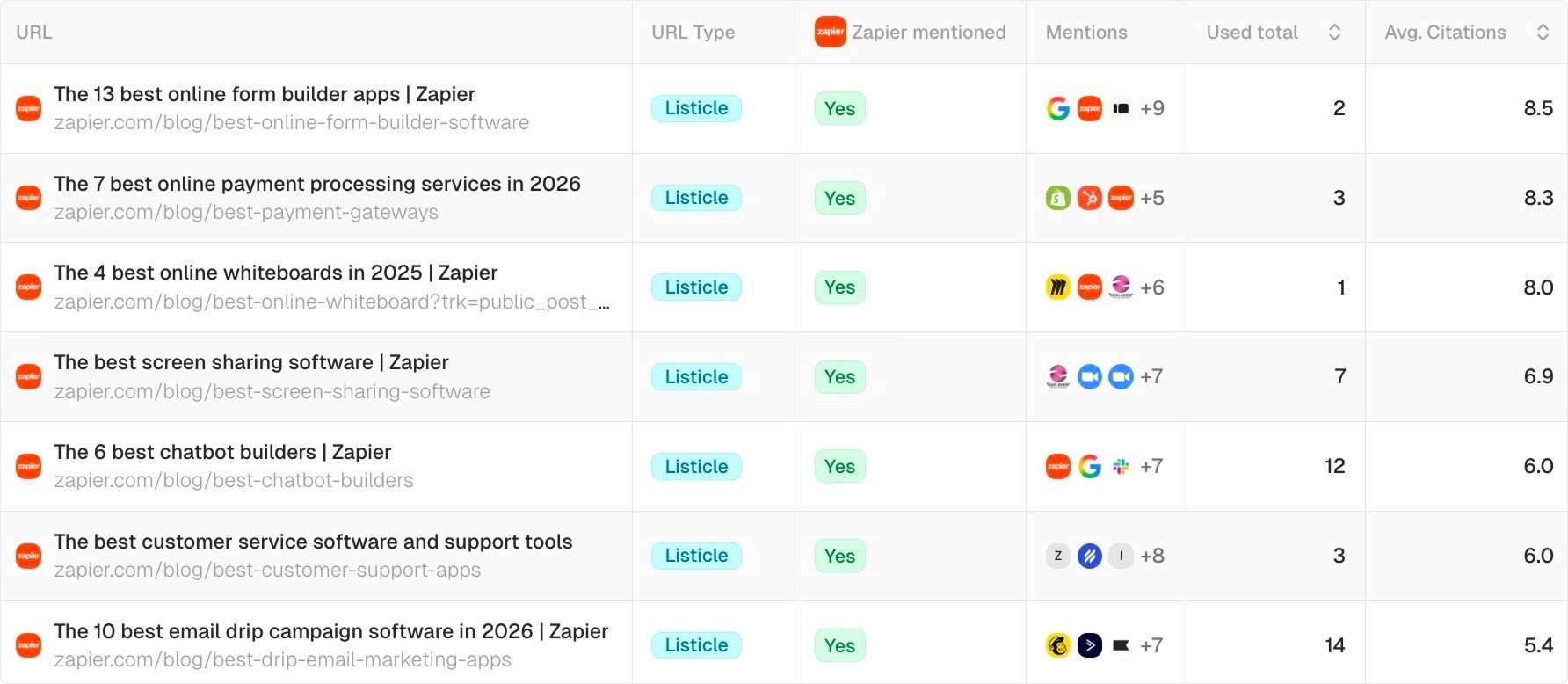

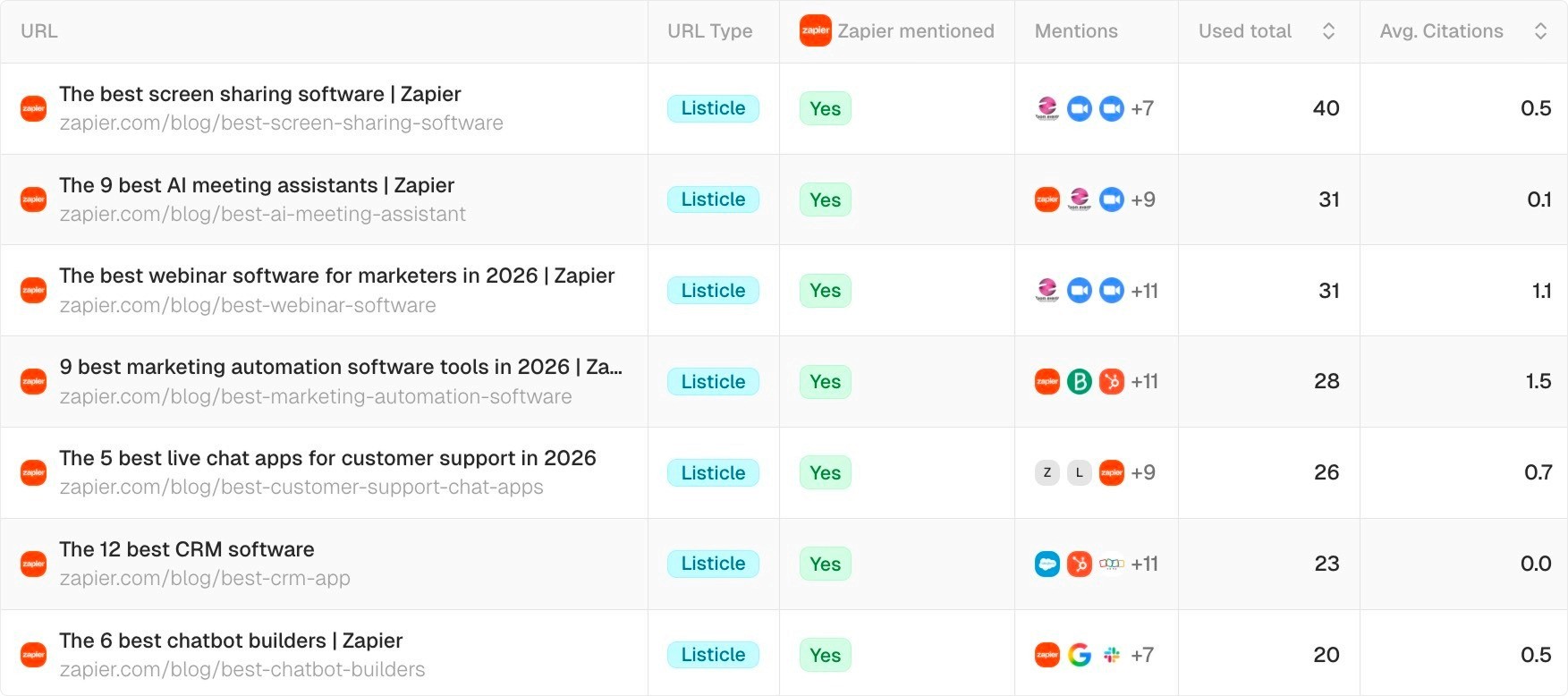

Let’s first look at a real example in the Peec AI platform to illustrate the point. The column “Used total” refers to how many times the URL was retrieved and “Avg. Citations” shows on average how often it was used per AI answer.

Below, we see some high-performing Zapier “best of” sources in ChatGPT with extremely high citation rates:

Now, let’s compare the same Zapier source URLs in Perplexity. We see that in general average citation rates are much lower:

To fully understand the intricacies of AI citation rates, we will dive into an analysis of over 1 million of them. Let’s get started.

What is a good citation rate?

To give your teams some easy-to-understand benchmarks, we looked at over 1 million citations from a harmonized dataset.

Harmonized simply means that the dataset is evenly split by query intent and that all prompts are non-branded. The aim is to provide a balanced view of citation rates.

We look at the following factors:

AI model: Do citation rates change depending on the model?

Prompt intent: Does citation rate vary based on query intent?

Content type: Do LLMs cite certain content types more than others?

ChatGPT: Turns out the “G” stands for generous

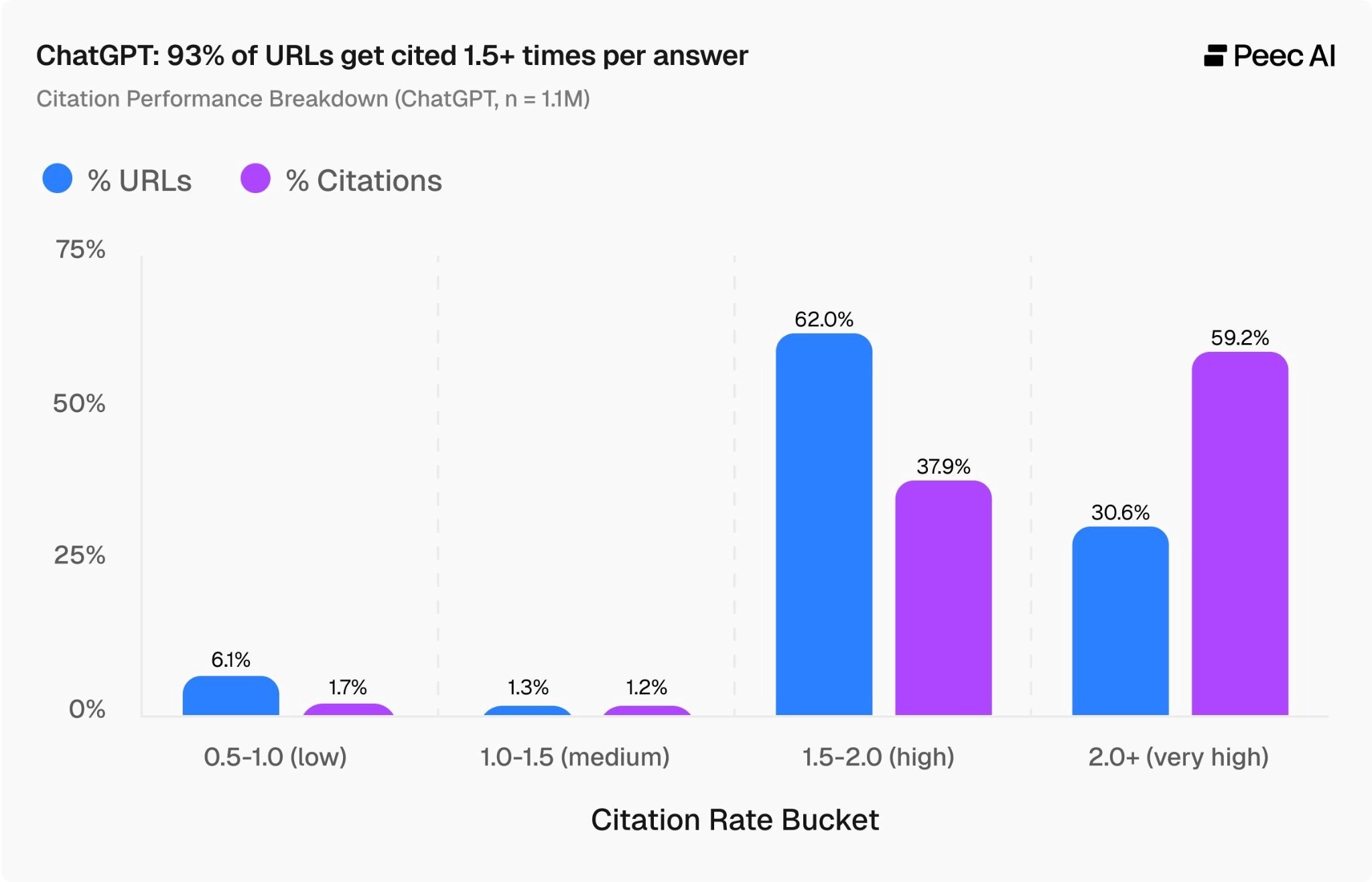

The chart shows how many webpages in the dataset land in each citation rate bucket. A URL in the 2.0 bucket, for example, was cited twice per AI answer on average. This will be our “very high” citation bucket.

Starting with this very high citation rate bucket (2.0+), we can see that 31% of URLs fall into it.

In other words, almost a third of URLs across the entire dataset are cited two or more times per AI answer by ChatGPT. These URLs make up 59% of all citations.

What this means: ChatGPT is generous with citations across the board, but the top-cited pages see disproportionately high AI visibility.

For this reason, we recommend AI teams set a benchmark citation rate of 2.0+ for ChatGPT.
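If you want to reproduce this kind of bucket view on your own data, a minimal sketch could look like the following. The bucket edges (0.5 wide, open-ended at 2.0+), the function names, and the sample numbers are all assumptions for illustration, not the exact method behind the chart.

```python
from collections import defaultdict

# Assumed bucket edges: 0.5-wide buckets, then an open-ended 2.0+ bucket.
BUCKETS = [
    (0.0, 0.5, "0-0.5"),
    (0.5, 1.0, "0.5-1.0"),
    (1.0, 1.5, "1.0-1.5"),
    (1.5, 2.0, "1.5-2.0"),
]


def bucket_label(rate):
    """Map a citation rate to its bucket label."""
    for low, high, label in BUCKETS:
        if low <= rate < high:
            return label
    return "2.0+"


def bucket_shares(pages):
    """pages: list of (retrievals, citations) tuples, one per URL.

    Returns {bucket: (share_of_urls, share_of_citations)}.
    """
    url_counts = defaultdict(int)
    citation_counts = defaultdict(int)
    for retrievals, citations in pages:
        label = bucket_label(citations / retrievals)
        url_counts[label] += 1
        citation_counts[label] += citations

    total_urls = len(pages)
    total_citations = sum(citation_counts.values())
    return {
        label: (
            url_counts[label] / total_urls,
            citation_counts[label] / total_citations if total_citations else 0.0,
        )
        for label in url_counts
    }


# Hypothetical sample: four URLs, each retrieved 10 times.
shares = bucket_shares([(10, 25), (10, 4), (10, 8), (10, 3)])
print(shares["2.0+"])  # (0.25, 0.625)
```

In this made-up sample, one URL in four sits in the 2.0+ bucket but collects well over half of the citations, which is the same kind of concentration described above.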

Google AI Mode: Does not show favoritism

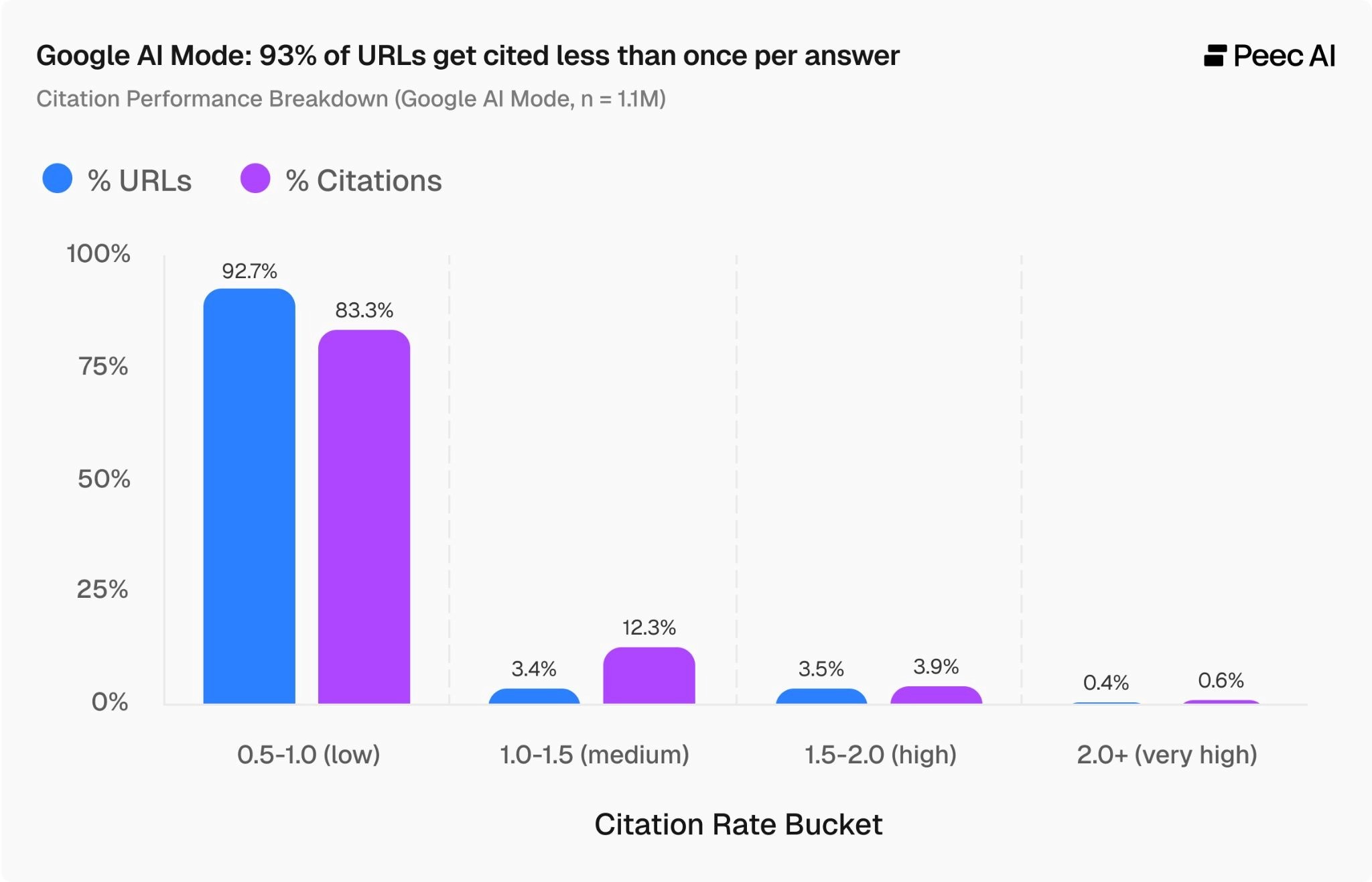

Now let's look at Google AI Mode. The picture is significantly different. We see almost all URLs cluster in the low citation rate bucket on the left of the chart. In simple terms, more than 9 out of 10 URLs have a citation rate below 1.0.

Google AI Mode is much more consistent than ChatGPT, with a narrower range of citation rates overall.

A citation rate below 1.0 means a URL is cited less often than it is retrieved, and that is the case for most URLs here. There is a small uptick in citations in the 1.0–1.5 category, where just 3% of URLs account for 12% of citations.

This is the sweet spot to aim for, so we recommend AI visibility teams target a citation rate of 1.1–1.5. Above 1.5 would be exceptional, but we want to offer a fair target to aim for.

Perplexity: A tale of two extremes

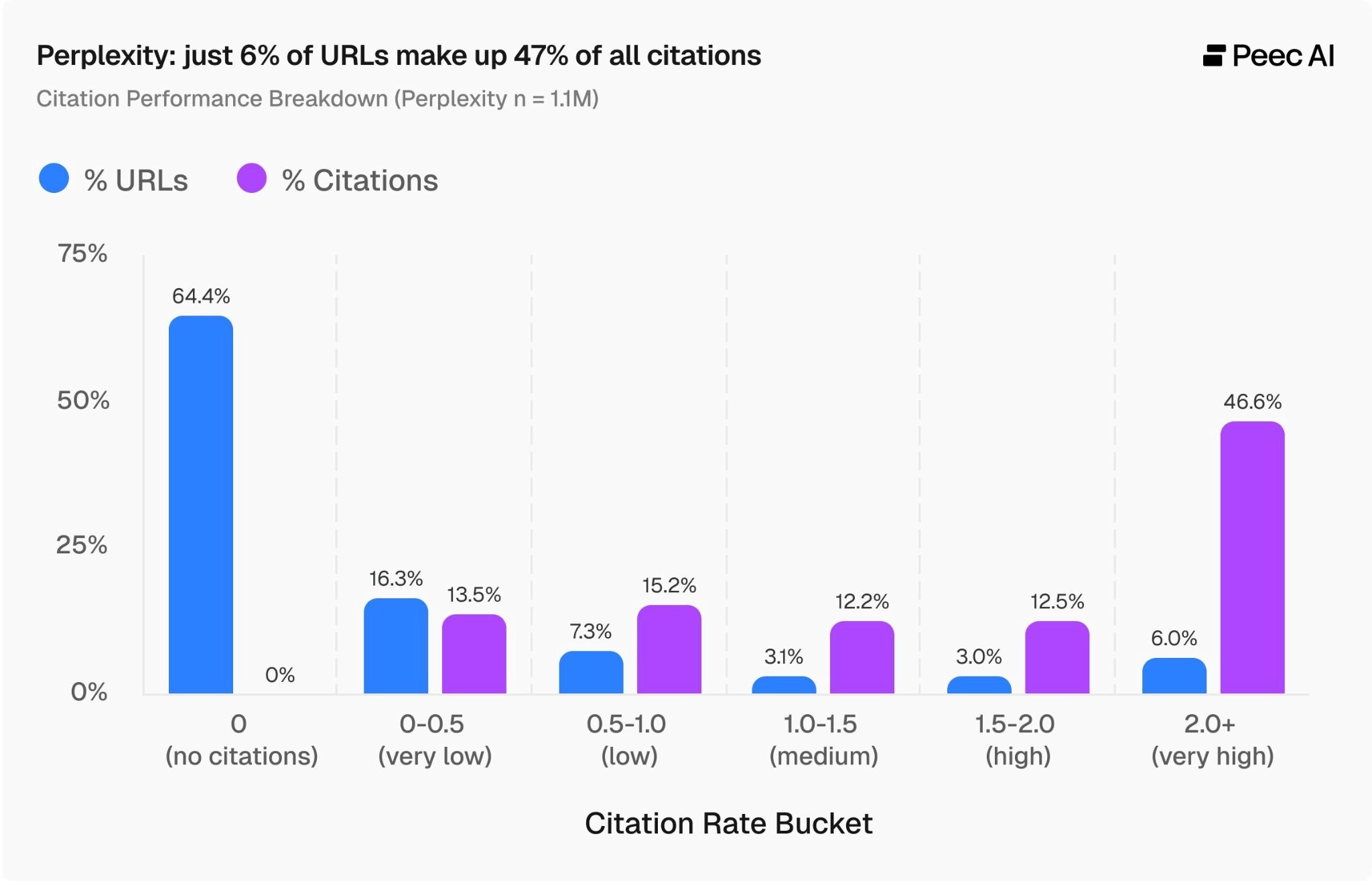

On to Perplexity. The chart tells a tale of two sides.

On the left side, we see that a staggering 64% of URLs never get cited. We should note that Perplexity does show these sources in the sidebar, but is much less likely to cite them in the actual AI answers. To make this visible, we added the 0 citation rate and 0–0.5 buckets for Perplexity.

On the right side of the chart, we see that just 6% of URLs fall in the high citation rate category of 2.0+, yet they make up just under half of all citations. This shows that Perplexity strongly favors a select few URLs.

For this reason, we suggest a Perplexity citation rate benchmark of 1.5–2.0. If you hit this citation rate, you are doing well.

With such a significant portion of content falling in the low and zero citation range, we recommend cross-checking your data with ChatGPT and Google AI Mode, because it may well be that your content is already successful on those AI channels.

Prompt intents

Next, let's briefly look at how query intents affect citation rates.

The four prompt intents we analyzed are:

Informational: The user wants to learn something. ("How does solar energy work?")

Navigational / Local: The user is looking for a specific website or nearby place. ("Coffee shop near me," "bank login page")

Commercial: The user is researching options before a purchase. ("Best running shoes 2025," "high performance laptop comparison")

Transactional: The user is ready to take action, usually to buy something. ("Buy running shoes online," "subscribe to streaming service")

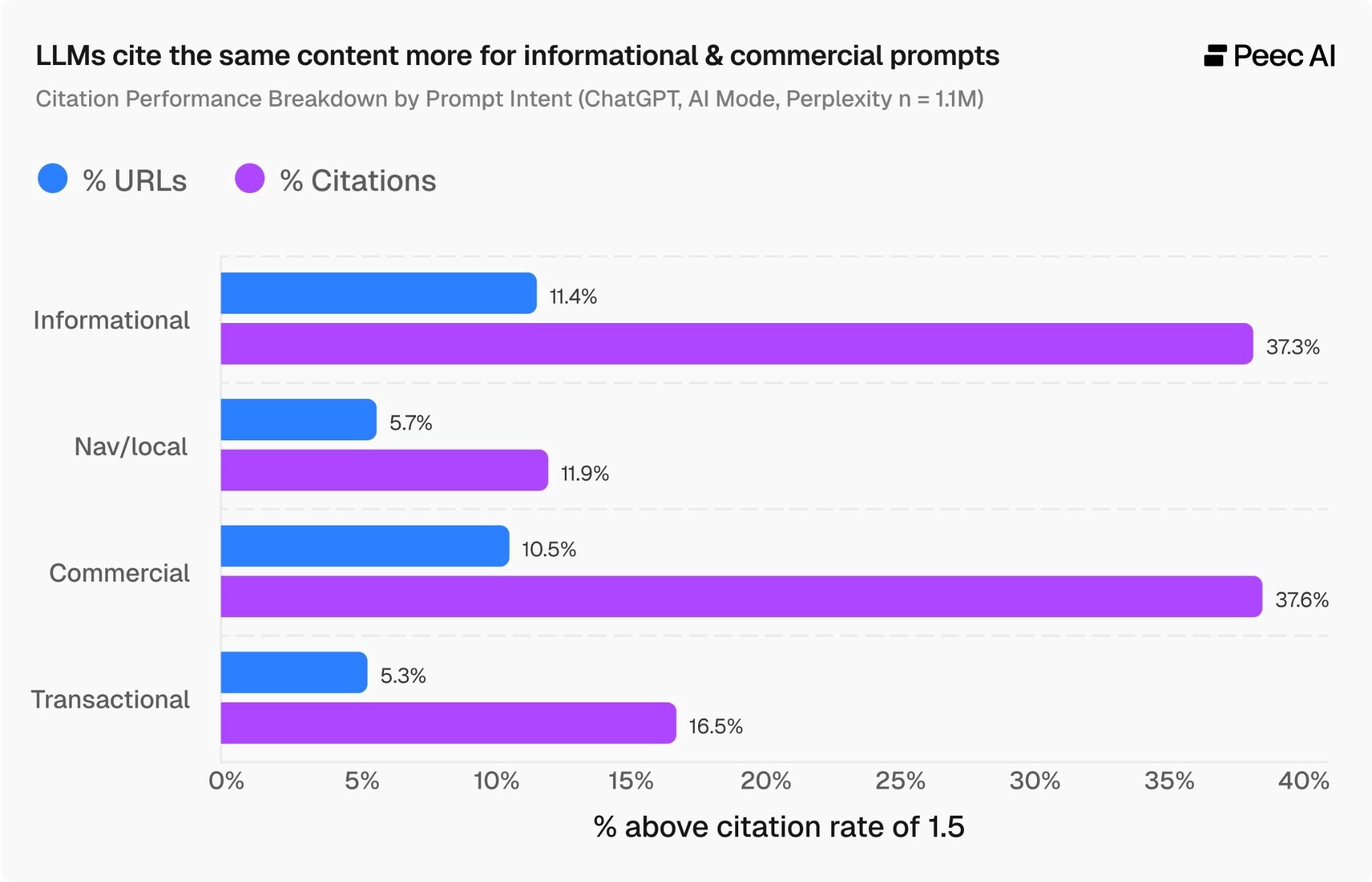

The chart shows what share of webpages make it into the top citation rate bucket of 2.0+ for each intent, alongside the percentage of citations this accounts for across the whole dataset.

The two standout figures are for informational and commercial intent. Around 10–11% of URLs land in this high citation category, but they make up 37% of all citations.

For these intents, where a user is seeking information or comparing product options, LLMs are more likely to cite the same webpage repeatedly.

But for navigational/local and transactional intents, this is not the case. This makes sense: with transactional queries, users are often exploring multiple product pages, and for navigational/local queries, multiple service provider pages.

Which content types get cited most?

We wanted to answer the question: are certain content types preferred by LLMs across all prompt intents?

To keep the analysis reliable, we filtered out any content types that made up less than 5% of the dataset. For each remaining content type, we then looked at what percentage of its URLs fell into the high citation rate category (2.0+ for ChatGPT and 1.5+ for AI Mode and Perplexity).
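The filtering and scoring described above can be sketched roughly as follows. The function name, the content type labels, and the sample data are hypothetical; only the 5% presence cutoff and the high citation rate thresholds come from the study.

```python
from collections import Counter


def high_citation_share_by_type(pages, high_threshold, min_presence=0.05):
    """pages: list of (content_type, citation_rate) tuples, one per URL.

    Returns {content_type: (presence, share_in_high_bucket)} for every
    content type that makes up at least min_presence of the dataset.
    """
    totals = Counter(content_type for content_type, _ in pages)
    high = Counter(
        content_type for content_type, rate in pages if rate >= high_threshold
    )
    n = len(pages)

    result = {}
    for content_type, count in totals.items():
        presence = count / n
        if presence < min_presence:
            continue  # too rare in the dataset to analyze reliably
        result[content_type] = (presence, high[content_type] / count)
    return result


# Hypothetical sample of 40 URLs, using the 2.0+ threshold for ChatGPT.
sample = (
    [("listicle", 2.4)] * 10
    + [("listicle", 0.6)] * 10
    + [("product_page", 2.1)] * 2
    + [("product_page", 0.3)] * 17
    + [("homepage", 2.2)]
)
result = high_citation_share_by_type(sample, high_threshold=2.0)
print(result["listicle"])    # (0.5, 0.5)
print("homepage" in result)  # False, below the 5% presence cutoff
```

The two numbers per content type correspond to the two dimensions used in the charts that follow: how present a content type is in the dataset, and how often its URLs reach the high citation rate category.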

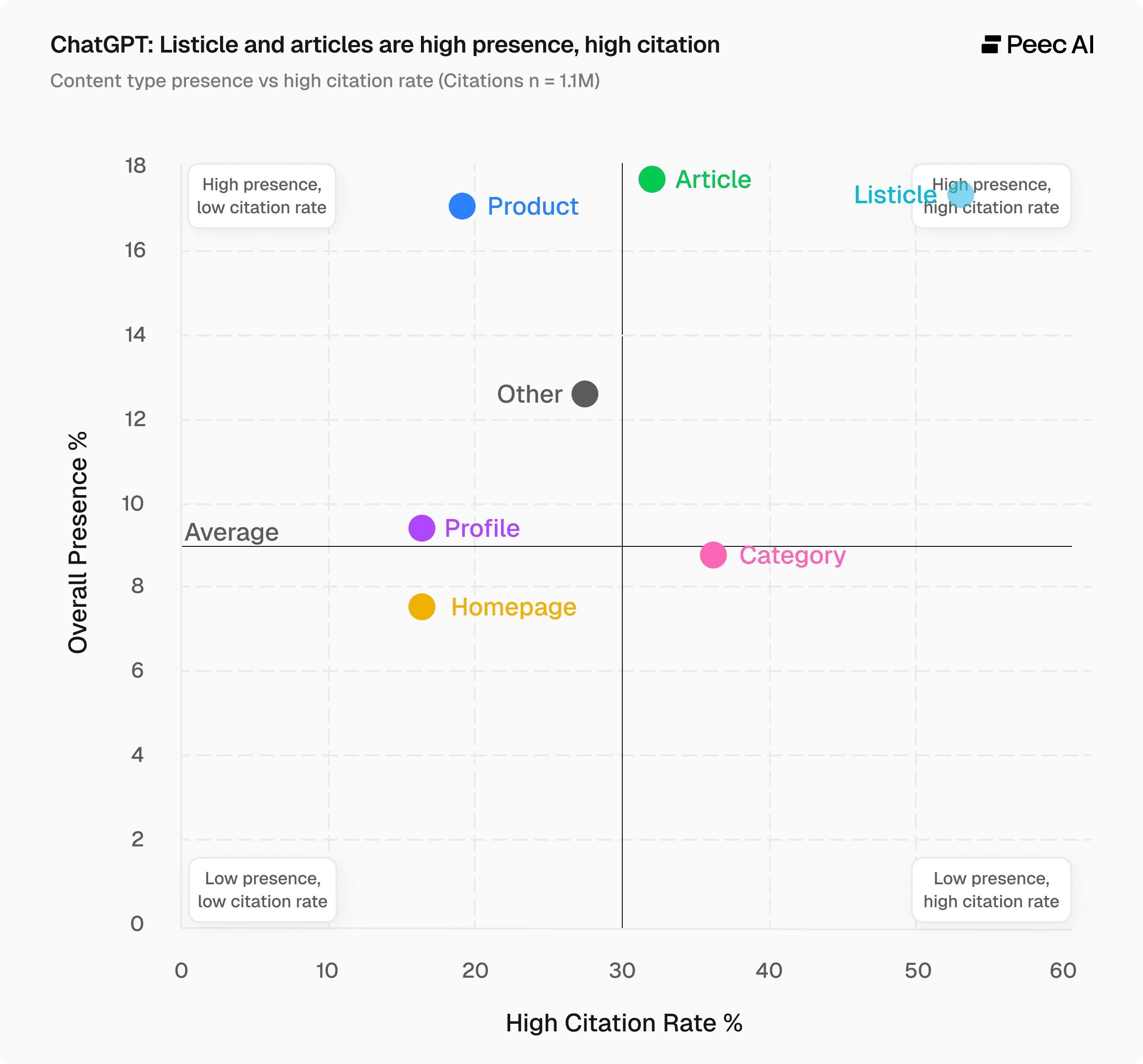

ChatGPT

For ChatGPT, the chart plots the share of each content type that reaches the high citation rate category on the horizontal axis and its overall presence in the dataset on the vertical axis.

We split the chart into four quadrants. The top-right quadrant is where you want to be. For ChatGPT, listicles make up a large share of the dataset (just under one-fifth of all URLs) and also have a very high citation rate, with 52% of all listicles being cited two or more times per AI answer. Product pages, despite their high presence, don't perform as well on citations.

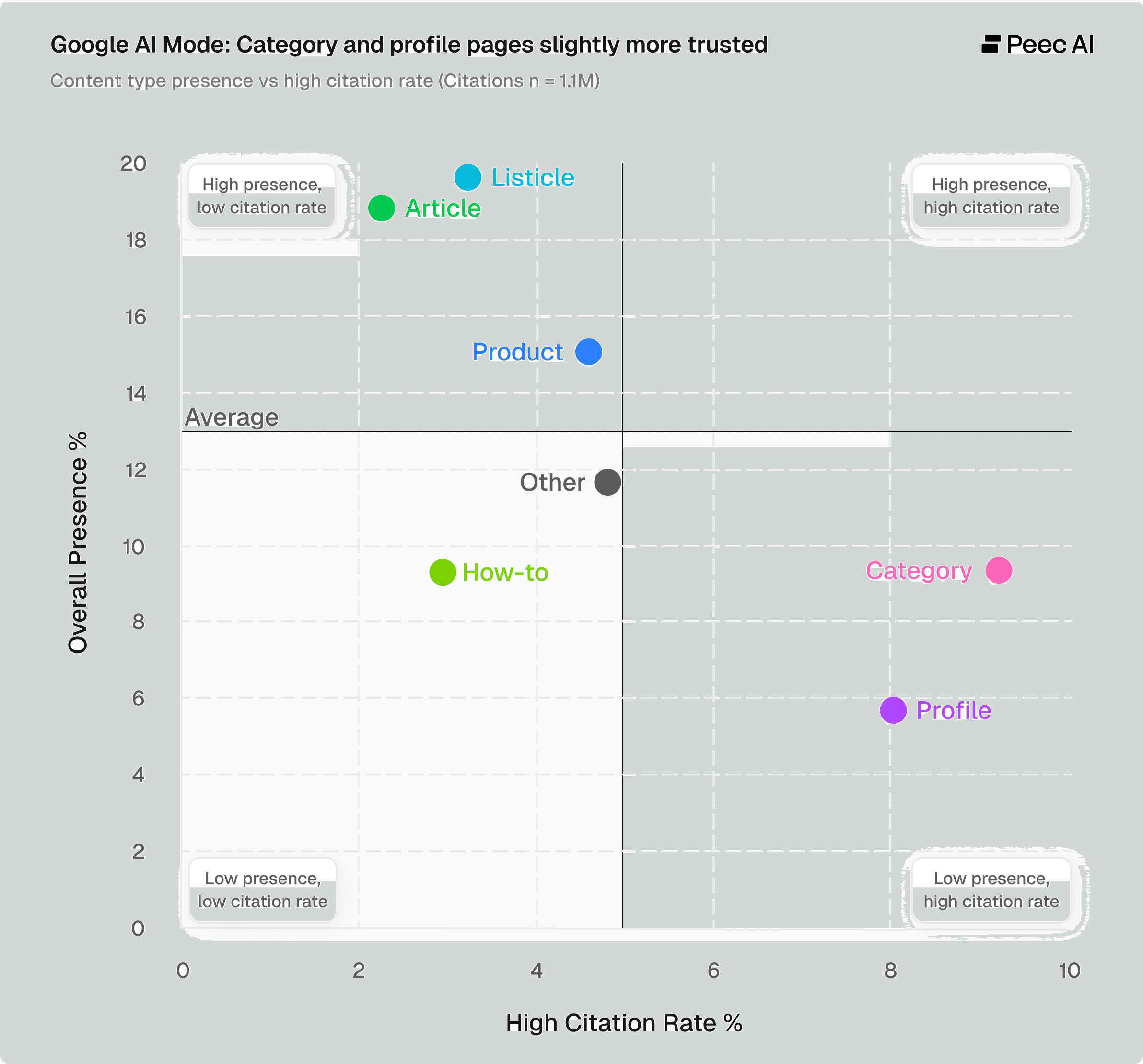

Google AI Mode

What stands out for Google AI Mode is that no content types make it into the "high presence, high citations" category, and most cluster to the left of the chart. The percentage of content pieces making it into the high citation rate category is roughly five times lower than for ChatGPT, with category pages as the top performer at just under 10%, compared to ChatGPT listicles at 52%.

Among the most-cited pages, Google AI Mode cites category and product pages significantly more often. This shows that AI Mode tends to prefer official sources, such as brand websites and their product and category pages. (Homepages were also one of the most-cited sources but didn't meet the 5% presence cutoff in this case.)

Overall, Google AI Mode is fairly consistent and rarely awards high citation rates, with only a slight preference for brand-owned content. Another interpretation is that Google AI Mode may be more likely to spread citations evenly across different sources rather than relying on one source too heavily.

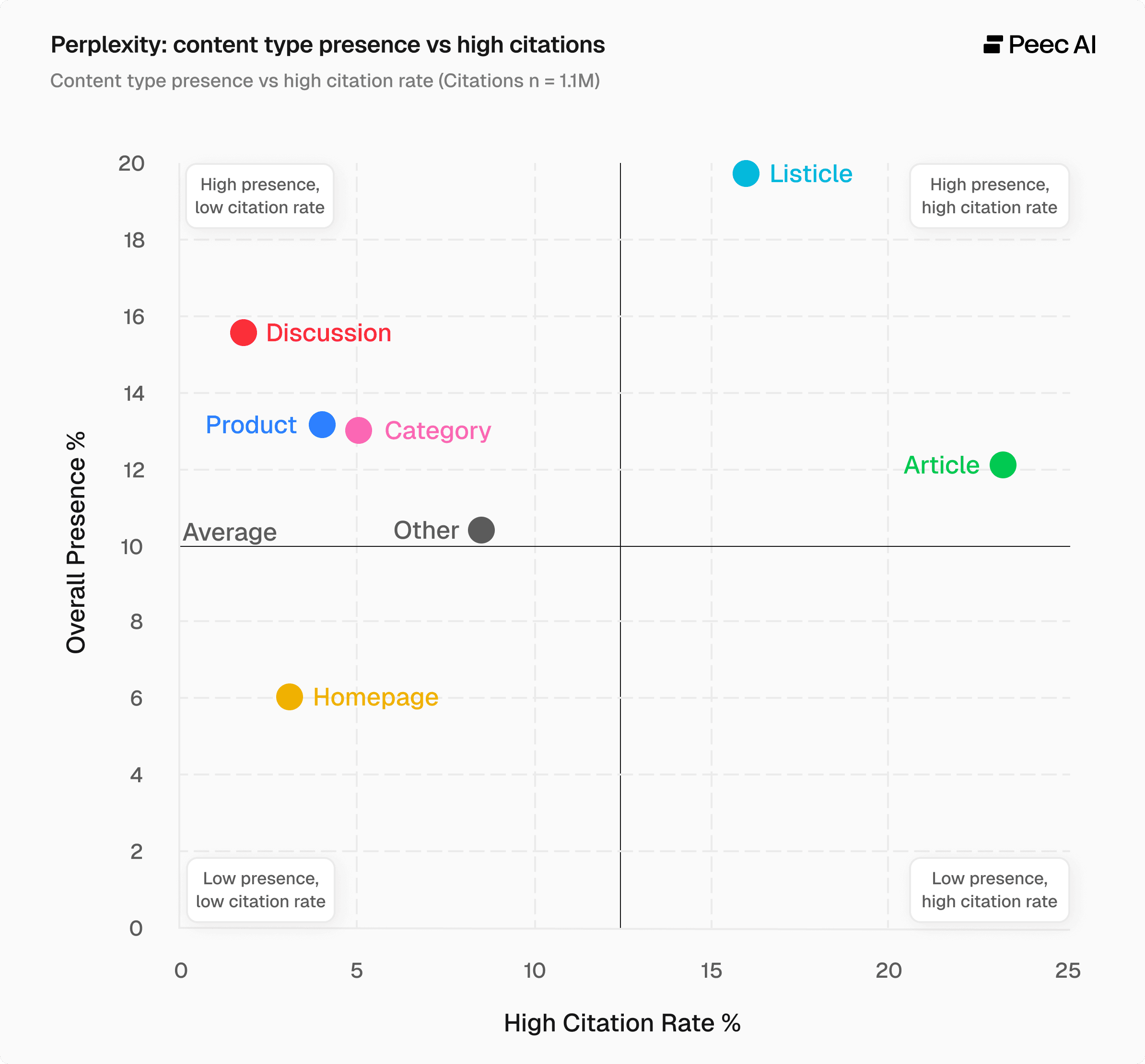

Perplexity

Finally, let's look at Perplexity. Here we also see a fairly extreme distribution, which supports our initial chart showing that just around 6% of URLs make it into the high citation rate bucket.

When we split by content type, we can see that many content types cluster to the left of the chart, meaning very few of their URLs reach a high citation rate. Listicles and articles make it into the high presence, high citation category, but once again the numbers are much lower than for ChatGPT.

The takeaway here is not "just do listicles." Rather, Perplexity is very selective with its top-performing content.

Summary: Benchmark by platform

The data shows that citation rates vary significantly across AI models. ChatGPT cites generously, Google AI Mode is conservative and consistent, and Perplexity concentrates its citations on a small number of sources.

Knowing where your content stands on each platform is the first step to improving your AI visibility. Use these benchmarks as a starting point, then adjust them based on your niche and the AI platforms that matter most to your audience:

ChatGPT: 2.0+

Google AI Mode: 1.1–1.5

Perplexity: 1.5–2.0
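To check your own numbers against these benchmarks, a small helper along these lines may be a useful starting point. The benchmark ranges are the ones recommended in this study; the function name and the three status labels are assumptions for illustration.

```python
# Benchmark ranges per platform: (lower bound, upper bound or None if open-ended).
BENCHMARKS = {
    "ChatGPT": (2.0, None),
    "Google AI Mode": (1.1, 1.5),
    "Perplexity": (1.5, 2.0),
}


def compare_to_benchmark(platform, rate):
    """Classify a citation rate relative to the platform's benchmark range."""
    low, high = BENCHMARKS[platform]
    if rate < low:
        return "below benchmark"
    if high is not None and rate > high:
        return "above benchmark"
    return "at benchmark"


# Hypothetical citation rates for one URL on each platform.
print(compare_to_benchmark("ChatGPT", 2.3))         # at benchmark
print(compare_to_benchmark("Google AI Mode", 0.7))  # below benchmark
print(compare_to_benchmark("Perplexity", 2.4))      # above benchmark
```

Since the benchmarks are meant as a starting point, the ranges in the dictionary are the part to adjust for your niche.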

If you're not sure where your content stands across these platforms, AI search tracking tools like Peec AI give you a clear starting point.