We analyzed 232,000 citations across ChatGPT, Google AI, Perplexity, and others to answer one question: have AI platforms gotten better at filtering out self-promotional listicles over the last 12 weeks?

Read on for the results.

TL;DR

Recent studies, including this piece from Seer, have found that in certain niches the overall share of listicles used as sources in AI search is dropping. Rather than looking at the listicle rate as a whole, we wanted to look specifically at the rate of self-promotional listicles.

Using Peec AI data, we analyzed 232,000 citations across 13,000 listicles over 12 weeks (Dec 2025–Feb 2026) on ChatGPT, Google AI Mode, Perplexity, Copilot, Gemini, and AI Overviews.

Note that all findings are vertical-specific. We focused on software and software reviews, a niche with a traditionally high volume of self-promotional content.

If AI platforms are going to start filtering out self-promotional content, the effect will likely show up first where that content is already highly prevalent.

Key findings:

No evidence of algorithmic correction over time: the self-promo rates remain stable throughout the study period. If brands are witnessing corrections, these are likely happening on a case-by-case basis rather than systemically.

~11% of citations come from self-promotional listicles where companies rank their own products.

ChatGPT has by far the lowest self-promo rate at just ~4%, compared to ~10–11% for Google AI Mode and Perplexity.

The organic search > LLM delay: it could be that Google first aims to correct organic search, and the results in LLMs may take a little longer to catch up.

We still don't recommend using self-promotional listicles: The reputational and algorithmic risks aren’t worth it.

Bottom line: AI search hasn't found a way to actively filter self-promotional content (yet).

What is a self-promotional listicle?

A self-promotional listicle is a "best of" roundup in which a brand ranks itself as the top solution and lists competitors below. By nature, the comparison is heavily biased towards whoever is writing it.

It’s been a controversial SEO tactic for years, and that controversy has carried over into AI search. SEO experts like Lily Ray have cautioned that this type of content can be punished algorithmically, and the conventional wisdom is that as AI-powered search gets smarter, it should get better at filtering out manipulative content like this.

We wanted to test that assumption with data.

Here’s an example of a self-promo listicle from cybersecurity company SentinelOne. It’s clearly positioning itself as the top solution on the brand’s own blog, whilst listing other competitors in lower positions:

The question is: does this type of content serve the user? And, more importantly, will we see any notable changes in the data?

Methodology: 13,000 listicles, 232,000 citations, 12 weeks

Using Peec AI data, we ran a fixed set of non-branded, software review prompts across six major AI search platforms from December 2025 through February 2026:

ChatGPT

Google AI Mode

Perplexity

Microsoft Copilot

Google Gemini

Google AI Overviews

Our study included:

13,000 unique listicles surfaced across all six platforms

232,000 total citations tracked

12 calendar weeks of data collection (CW49 '25 through CW08 '26)

For every calendar week, we extracted the 1,000 top-performing listicles, measured by the number of retrievals by each AI platform. This kept the sample size consistent week to week, since overall retrieval volumes can vary.
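The weekly sampling step can be sketched in a few lines of Python. This is a minimal illustration, not the pipeline used for the study: the row layout, the function name `top_listicles`, and the choice to take the cut per week and per platform are all assumptions made for the example.

```python
from collections import defaultdict

def top_listicles(rows, n=1000):
    """Return the n most-retrieved listicle URLs per (week, platform) group.

    rows: iterable of (week, platform, url, retrieval_count) tuples.
    The tuple layout is hypothetical and chosen only for this sketch.
    """
    groups = defaultdict(list)
    for week, platform, url, count in rows:
        groups[(week, platform)].append((url, count))
    # Sort by retrieval count (descending), then URL, so ties are deterministic.
    return {
        key: [url for url, _ in sorted(items, key=lambda item: (-item[1], item[0]))[:n]]
        for key, items in groups.items()
    }

# Made-up sample rows; n=2 stands in for the 1,000 cut-off.
sample = [
    ("2025-CW49", "ChatGPT", "https://a.example/best-crm", 40),
    ("2025-CW49", "ChatGPT", "https://b.example/top-tools", 75),
    ("2025-CW49", "ChatGPT", "https://c.example/best-apps", 12),
    ("2025-CW50", "ChatGPT", "https://a.example/best-crm", 5),
]
top = top_listicles(sample, n=2)
```

Capping every group at the same `n` is what keeps the weekly sample comparable even when total retrieval volume swings.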

Detection method: We identified self-promotional content by matching domain names with brand mentions within the listicles. For example, if zapier.com published a listicle that mentioned "Zapier", we flagged it as self-promotional, but only if Zapier was promoting itself as a recommended product within that listicle.
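A rough sketch of that matching logic is shown here. It is a simplified stand-in rather than the actual detection code: the helper names are hypothetical, the list of recommended products is assumed to have been extracted from the listicle already, and the domain parsing is naive (it would mishandle suffixes such as .co.uk).

```python
from urllib.parse import urlparse

def brand_from_domain(url):
    """Naively derive a brand token from a URL, e.g. zapier.com -> 'zapier'.

    Assumes the URL includes a scheme (https://...) and a simple suffix.
    """
    host = (urlparse(url).hostname or "").lower()
    labels = [label for label in host.split(".") if label]
    if len(labels) >= 2:
        return labels[-2]  # label directly before the top-level domain
    return labels[0] if labels else ""

def is_self_promotional(url, recommended_products):
    """Flag a listicle whose publisher domain matches a product it recommends.

    recommended_products: product names the listicle recommends
    (assumed input; extracting them is outside this sketch).
    """
    brand = brand_from_domain(url)
    if not brand:
        return False
    normalized = {name.strip().lower().replace(" ", "") for name in recommended_products}
    return brand in normalized

flag_own_blog = is_self_promotional(
    "https://zapier.com/blog/best-automation-tools", ["Zapier", "Make"]
)
flag_third_party = is_self_promotional(
    "https://www.example-reviews.com/best-automation-tools", ["Zapier", "Make"]
)
```

Passing only the recommended products, rather than every brand mentioned on the page, mirrors the condition that the publisher must be promoting itself as a product.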

The Results: Self-promotion is still prevalent

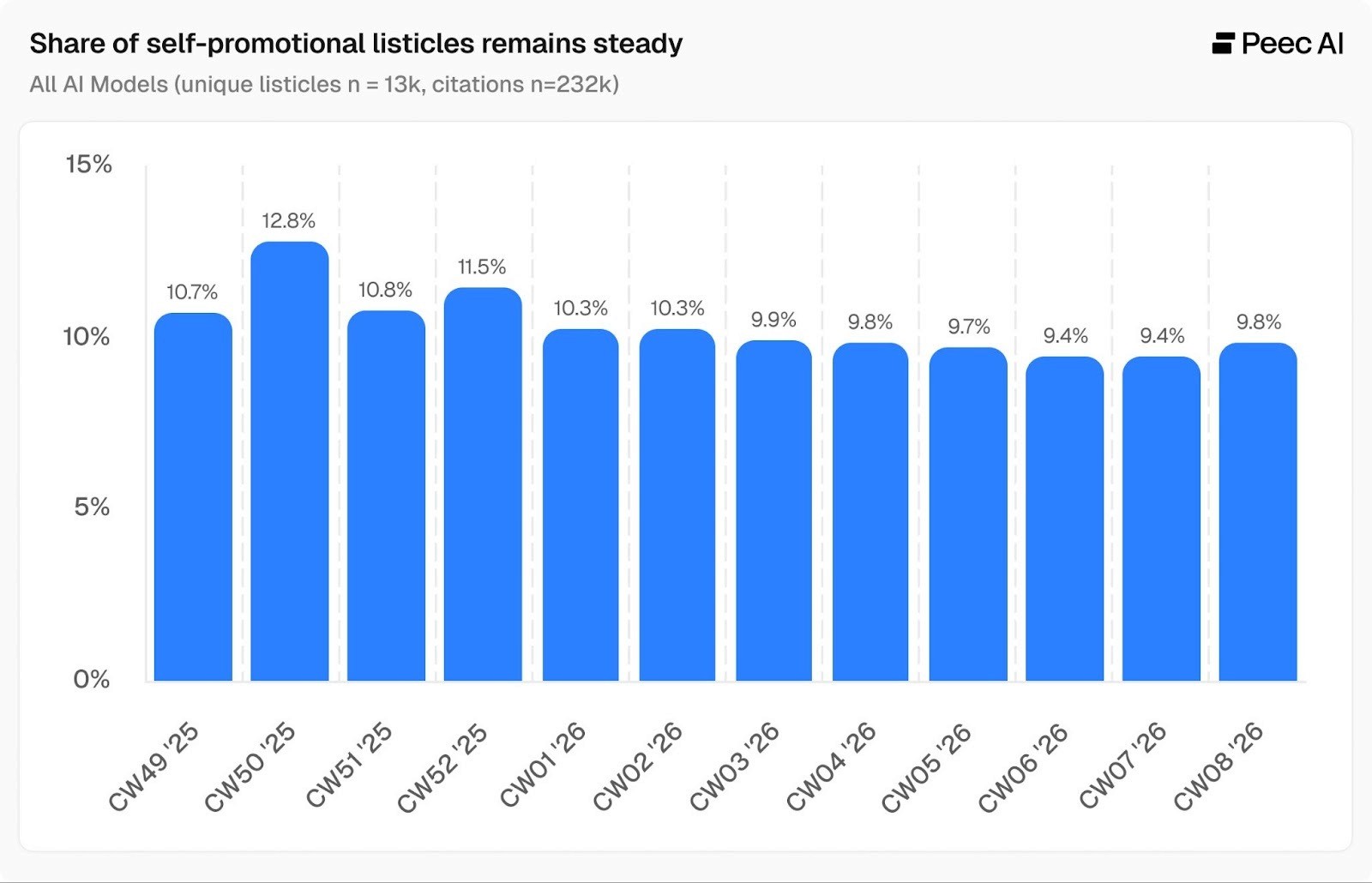

Approximately 1 in 10 citations in AI search results come from self-promotional listicles. Looking at the week-on-week average across six models below, we see that the range mainly hovers around 10%. We expect a certain amount of natural variation in the data and the findings are well within that range.
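For readers who want to reproduce this kind of figure on their own data, here is a minimal sketch of a weekly self-promo rate, assuming the rate is defined as flagged citations divided by all listicle citations in that week. The input layout and function name are hypothetical, and the sample data is invented purely to show the calculation.

```python
from collections import Counter

def weekly_self_promo_rate(citations):
    """citations: iterable of (week, is_self_promo) pairs, one per citation."""
    totals, flagged = Counter(), Counter()
    for week, is_self_promo in citations:
        totals[week] += 1
        if is_self_promo:
            flagged[week] += 1
    # Share of each week's citations that point at a flagged listicle.
    return {week: flagged[week] / totals[week] for week in totals}

# Invented sample: 1 flagged citation out of 10 in CW49, none out of 4 in CW50.
sample = [("CW49", True)] + [("CW49", False)] * 9 + [("CW50", False)] * 4
rates = weekly_self_promo_rate(sample)
```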

Model-specific findings

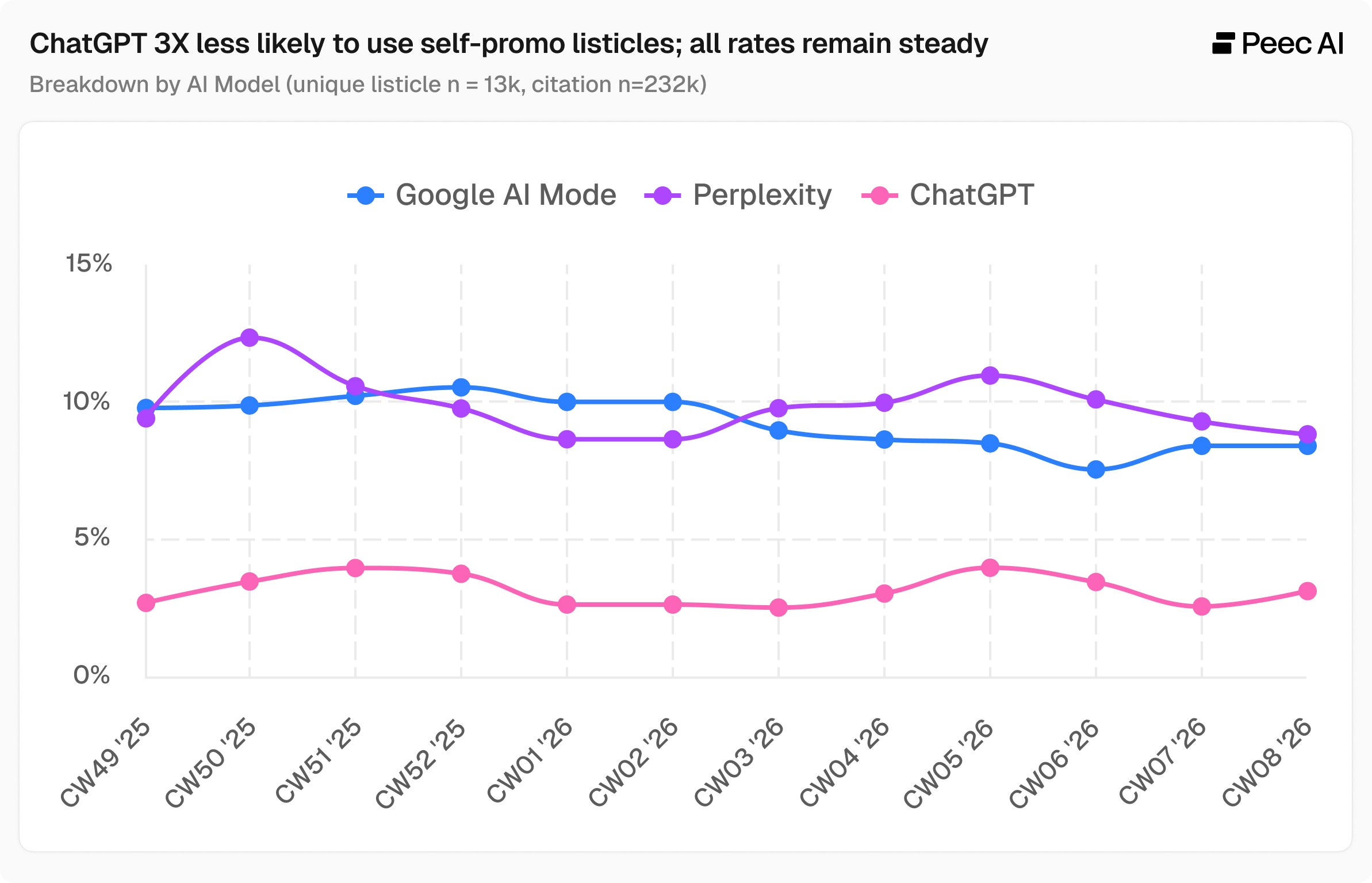

Let’s now look at three models and their self-promo listicle rates independently: ChatGPT, Google AI Mode, and Perplexity. We selected these three models in particular because they offer a diverse mix and are the models believed to be most influenced by Google, compared with models like Copilot.

1. No evidence of algorithmic correction

The data shows natural week-to-week variation but no sign that platforms are getting better at filtering self-promotional content.

December and January showed the highest rates of self-promo listicle use (11-14%)

February showed slight improvement for Google AI Mode (9-10%)

Perplexity remained consistently high (10-12%)

ChatGPT maintained low but stable rates (3-5%)

2. ChatGPT cites self-promo listicles roughly 3x less often than competitors

ChatGPT's average self-promo listicle rate of 3.6% is roughly a third of the rate for Google AI Mode (10.3%) and Perplexity (10.4%). This suggests OpenAI's retrieval algorithms or content filters are significantly different from competitors'.

Even at 3.6%, that’s still roughly 1 in 28 citations coming from a brand promoting its own product to someone trying to make a purchase decision.
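The arithmetic behind the "3x" and "1 in 28" figures is easy to check from the reported averages. The short sketch below uses only the three percentages quoted in this section; the variable names are illustrative.

```python
# Average self-promo listicle rates reported above, in percent.
chatgpt, google_ai_mode, perplexity = 3.6, 10.3, 10.4

# How many times higher each competitor's rate is than ChatGPT's.
ratio_ai_mode = round(google_ai_mode / chatgpt, 1)
ratio_perplexity = round(perplexity / chatgpt, 1)

# A 3.6% rate expressed as "1 in N citations".
one_in_n = round(100 / chatgpt)

print(ratio_ai_mode, ratio_perplexity, one_in_n)  # 2.9 2.9 28
```

Both ratios land just under 3, which is why "3x" should be read as an approximation.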

Why is the rate in ChatGPT so low?

A few factors likely explain why ChatGPT sits at 3.6% while Google AI Mode and Perplexity hover around 10-11%. ChatGPT draws from a more diverse range of sources overall, relies more heavily on educational and training sites in software reviews (7.8% vs 3.6% for Perplexity and 2.5% for Google AI Mode), and uses Zapier significantly less than other platforms (~1% of URLs vs ~8%). These are niche-specific observations, but they illustrate the kind of model-level differences that show up across prompt sets.

It's also worth noting that these findings come from a fixed set of prompts tracked consistently over time. That's intentional. Fixing the prompts is precisely what allows us to observe how citation rates change week to week, rather than attributing shifts to changes in what we asked.

What this means for AI search teams

The data shows self-promotion in listicles still “works” for now. Despite SEO experts' cautions and anecdotal evidence of Google punishing brands, self-promotional listicles are getting through AI retrieval filters and still generating citations.

However, we don’t actively recommend this type of content for several reasons:

Reputational risk: Users are becoming savvier about detecting self-serving content

Algorithmic risk: Top voices in the space have explicitly warned against this practice

Better alternatives exist: Earning placements in third-party listicles, building genuine review site relationships, and creating educational content are safer long-term strategies

The data may shift: While we see no correction yet, AI search platforms are actively working on this problem

As Lily Ray and other SEO experts have noted, self-promotional listicles can be punished algorithmically. Just because it's working today doesn't mean it will work tomorrow.

Key takeaway: AI search hasn't solved the self-promotion problem yet

After analyzing 232,000 citations across 12 weeks and 6 major AI platforms, the data shows AI search has not (yet) solved the self-promotion problem.

Rates vary by platform (ChatGPT at ~4%, others at ~10-11%), but no platform shows evidence of sustained algorithmic correction so far.

Follow us on LinkedIn for more research from the Peec AI team, shared weekly.