AI search has become a key touchpoint in the buying journey. ChatGPT, Perplexity, and Google's AI Overviews are already recommending brands, surfacing reviews, and shaping decisions - all before a user even visits your website.

The problem is measurement. Many teams aren't tracking their AI search visibility at all. And those who are often aren’t sure which metrics actually matter.

Traffic from large language models (LLMs) is underreported because when someone discovers your brand through ChatGPT, there’s often no click to track. They Google you next, or type your URL directly, and Google Analytics credits Google organic or direct traffic, not the AI. The old framework doesn't transfer 1:1.

This guide is my attempt to help you better understand how to measure your AI search visibility and which metrics matter. It's built on almost three years of working in AI search - with clients, through experiments, and by absorbing nearly everything written on the subject. I've also brought in some of the sharpest people in the industry to make sure I'm not missing anything important.

Here's what we've found actually works in AI search.

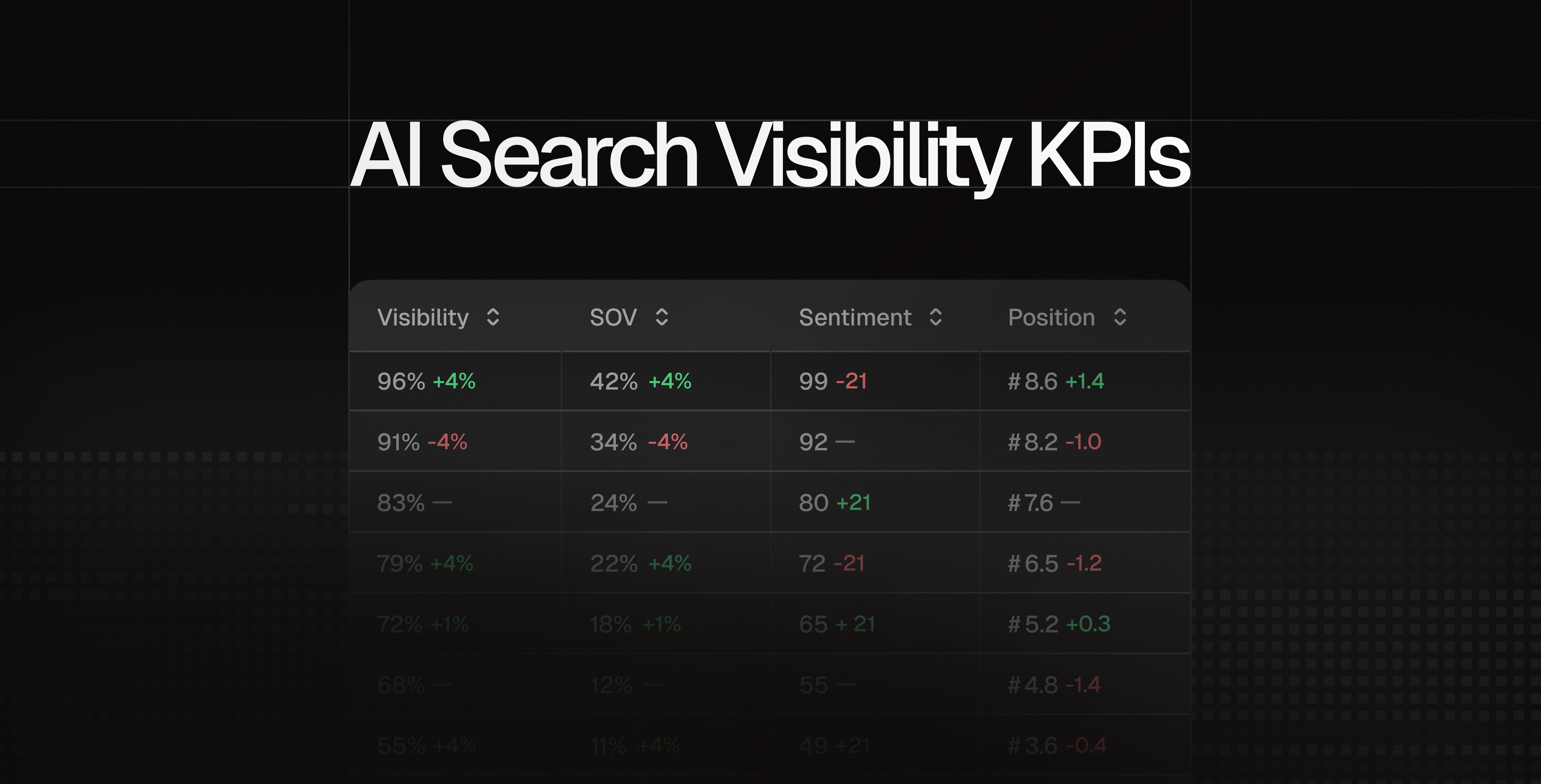

Visibility percentage: Does your brand appear in AI search?

The first question is simple: does your brand show up in AI searches at all? And when it does, how often?

This is where you need to look at your visibility. It tells you what percentage of relevant AI search responses actually include your brand.

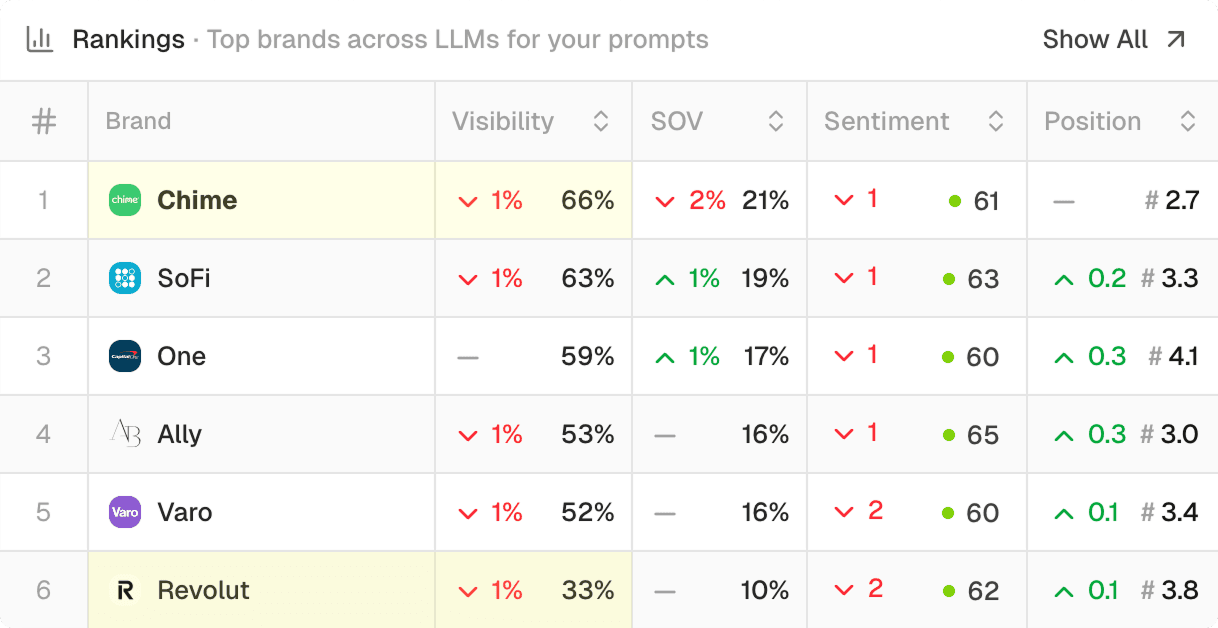

Here is an example from Peec AI: in the United States, Chime is twice as visible as Revolut. Chime is shown in 66% of chats, and Revolut in “just” 33%.

But a single visibility score doesn’t tell you the whole story. Divide your prompts into categories: by topic, by funnel stage, by customer segment. This way you can see where you're visible and where you're not. For example, are you showing up during awareness, when buyers are still figuring out your product category? Or only at the decision stage, when they're already comparing options?

Tracking individual prompts will always be unreliable because LLMs are non-deterministic by nature. But when you group prompts into categories, patterns become clearer and your results more measurable.
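As a rough illustration of grouping prompts by category, here is a minimal Python sketch that computes visibility as the share of collected responses mentioning a brand. The record layout, the category names, and the sample data are all assumptions made up for this example, not the output of any particular tracking tool.

```python
from collections import defaultdict

# Hypothetical sample data: one record per collected AI response.
# "category" is the prompt group; "brands" is the set of brands mentioned.
responses = [
    {"category": "awareness", "brands": {"Chime", "Revolut"}},
    {"category": "awareness", "brands": {"Chime"}},
    {"category": "awareness", "brands": set()},
    {"category": "decision", "brands": {"Revolut"}},
    {"category": "decision", "brands": {"Chime", "Revolut"}},
]


def visibility_by_category(responses, brand):
    """Return {category: share of responses in that category mentioning brand}."""
    totals = defaultdict(int)
    hits = defaultdict(int)
    for record in responses:
        totals[record["category"]] += 1
        if brand in record["brands"]:
            hits[record["category"]] += 1
    return {category: hits[category] / totals[category] for category in totals}


chime = visibility_by_category(responses, "Chime")
revolut = visibility_by_category(responses, "Revolut")
for category in chime:
    print(f"{category}: Chime {chime[category]:.0%}, Revolut {revolut[category]:.0%}")
```

Reporting one number per category, rather than per prompt, is what smooths out the run-to-run variation of individual responses.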

For a deeper dive into building your prompt library, see my guide on how to choose the right prompts for LLM tracking.

Position: How prominently does your brand appear in AI responses?

For SEO professionals, this one feels familiar. Higher positions get more exposure - that logic hasn't changed. In AI search, brands mentioned earlier in a response are more likely to grab a buyer's attention than those buried further down.

For example, let’s take someone asking ChatGPT for the best CRM. If your brand is tenth on the list, you're getting a fraction of the exposure of whoever's mentioned first or second. Position matters.

LLMs don't surface brands randomly. Two things drive it: how prominently a brand appears in their training data and which sources they pull from in real time to supplement that training. I can say with confidence that both are things you can influence.

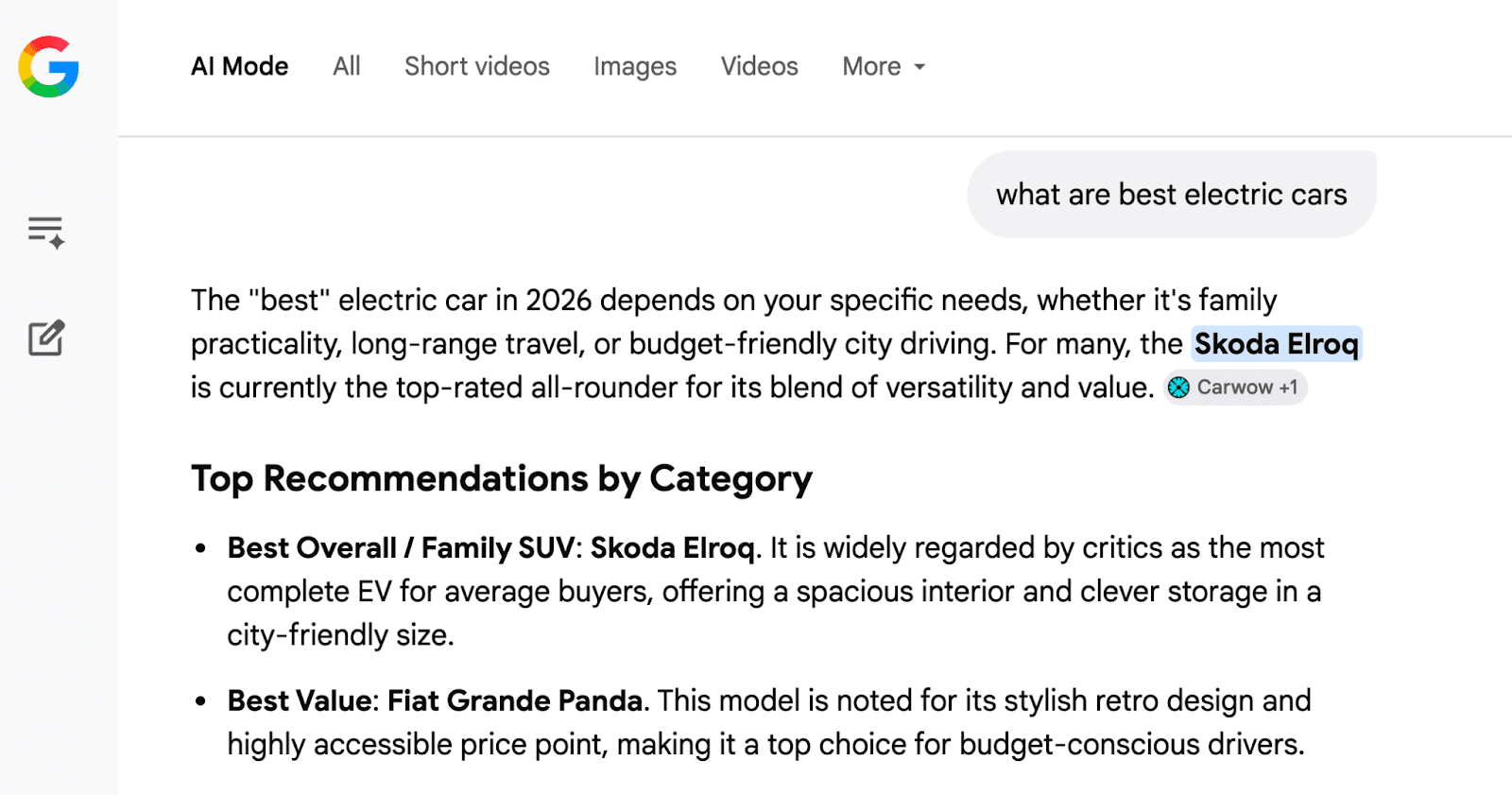

For instance, when I asked AI Mode “what are the best electric cars”, it recommended the Skoda Elroq at the top of the list.

From a branding perspective, this provides massive exposure and directly influences buying decisions. By positioning it as the #1 choice, the AI validates the car as the leading solution for families - an advantage that would vanish if the Elroq ranked even slightly lower.

Pro Tip for position tracking in LLMs: To get the most accurate picture, track position across multiple prompts and aggregate the data weekly. Day-to-day results can vary significantly, so weekly averages give you a clearer view of the actual trend and whether you’re climbing or losing ground. This works best for LLMs and AI search engines that make extensive use of online sources, such as AI Overviews, AI Mode, Gemini, ChatGPT, and Perplexity.
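The weekly aggregation can be sketched in a few lines of Python. This is only an illustration under assumed inputs: the observation tuples and their values are invented, and runs where the brand was not mentioned at all are simply left out, which is a simplification you may want to handle differently.

```python
from collections import defaultdict
from datetime import date
from statistics import mean

# Hypothetical observations: (date the prompt was run, brand position in the
# response, where 1 means mentioned first). Values are made up for illustration.
observations = [
    (date(2025, 3, 3), 2),
    (date(2025, 3, 4), 5),
    (date(2025, 3, 6), 2),
    (date(2025, 3, 10), 1),
    (date(2025, 3, 12), 3),
]


def weekly_average_position(observations):
    """Group positions by ISO (year, week) and average them per week."""
    by_week = defaultdict(list)
    for day, position in observations:
        iso = day.isocalendar()
        by_week[(iso[0], iso[1])].append(position)
    return {week: mean(positions) for week, positions in sorted(by_week.items())}


weekly = weekly_average_position(observations)
for (year, week), average in weekly.items():
    print(f"{year}-W{week:02d}: average position {average:.1f}")
```

A lower weekly average means the brand is being mentioned earlier in responses, so a falling number is the trend to look for.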

Brand sentiment: How does AI talk about your brand?

Visibility and ranking metrics tell you if you're in the room. Sentiment tells you what AI says about you once you're there.

This is one of the most undervalued areas in AI search optimization - and one of the most actionable. Unlike training data, which changes slowly, the sources shaping your brand's sentiment can often be fixed quickly. For established brands especially, this is where I'd start.

When a prospect is close to buying and asks "Is HubSpot easy to use?" or "Does HubSpot have good customer support?", that purchase decision hinges on whatever AI says next. Evaluation-stage prompts like these are where brand sentiment directly impacts revenue.

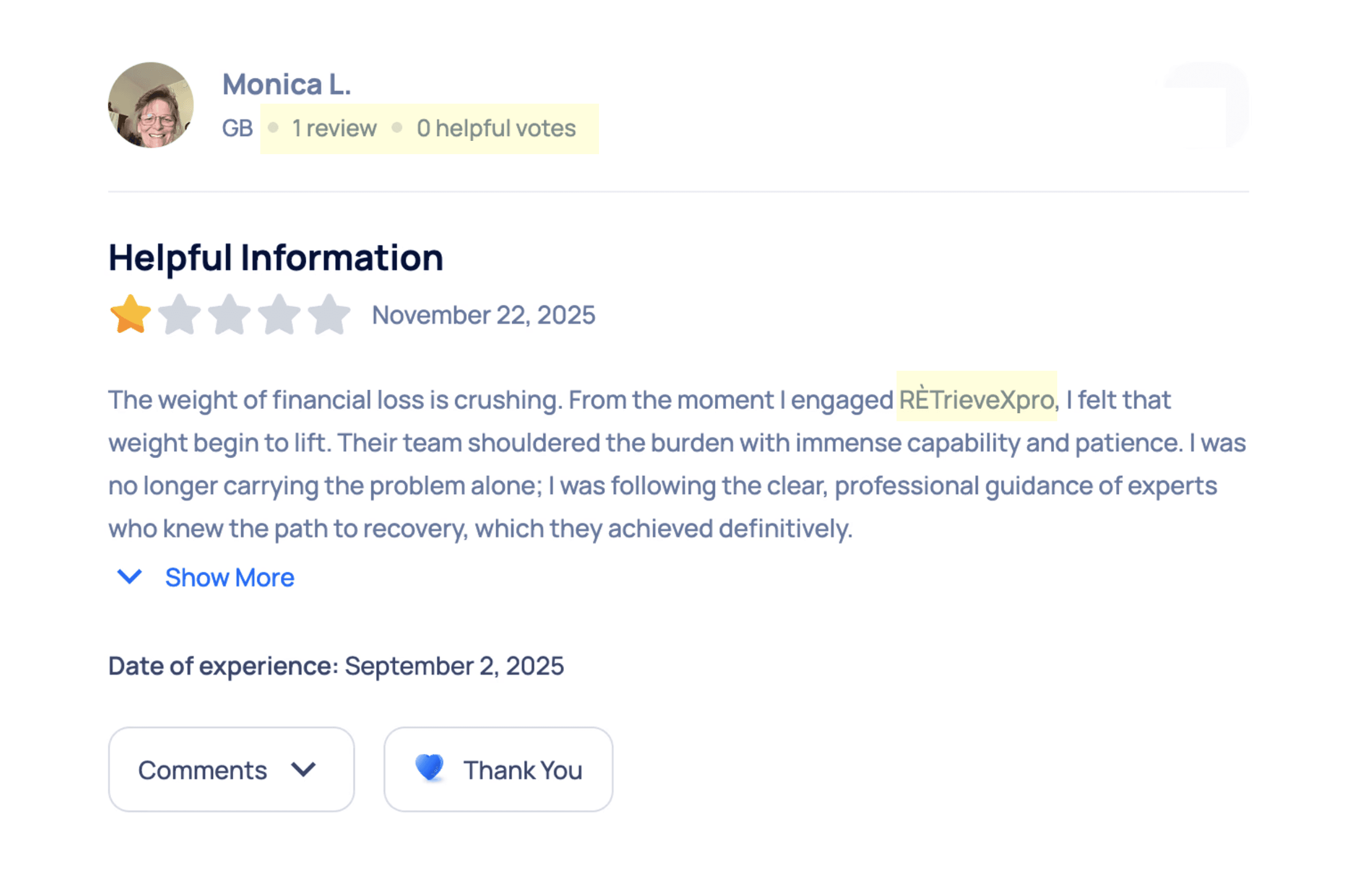

When auditing Revolut's AI presence, I found that LLMs were repeatedly citing Sitejabber, a review site riddled with what appear to be fake reviews. When I dug into it, 75% of negative reviews came from single-review accounts, many of them promoting unrelated services. The pattern was so obvious it would probably take one email from Revolut's legal team to get it resolved.

I do the same for Peec AI’s brand sentiment. When I spot a competitor misrepresenting our product somewhere, I reach out to get it corrected. The upside is threefold: readers get accurate information, brand sentiment in LLMs improves, and that negative content is less likely to get picked up in future training.

I've documented more tactics like this, including how to identify which sources are actually shaping LLM perception of your brand, in my extensive guide to tracking brand sentiment in LLMs.

Every brand deserves to be represented fairly. And now that you know this isn't just a PR issue but something that directly shapes how AI presents you, you know where to start.

Conversions and revenue from LLMs

It is possible to track AI's influence on revenue. Right now, the most practical way to measure this is to ask customers how they found you and track what percentage of your business is coming from LLMs.

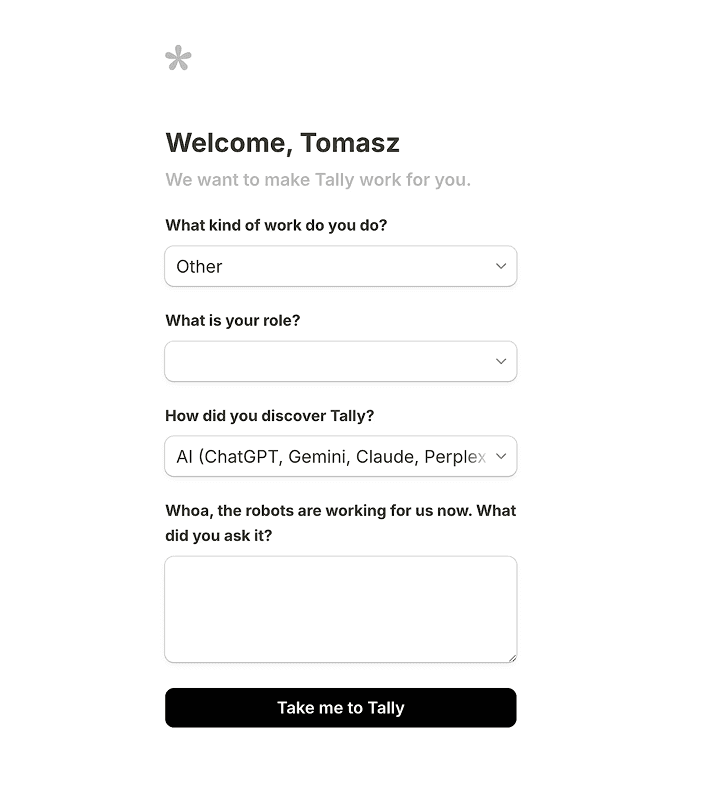

Where you ask matters. Some businesses collect this during demo calls or onboarding, where it fits naturally into the conversation. Others ask at signup, and if done well, it can work. Tally is a good example of this.

When a user selects 'AI' as their referral source, the form prompts them for the specific queries they used to find Tally. This allows Tally to calculate the conversion rate from AI platforms while gaining insight into the exact prompts driving discovery.

Once you know which clients came through LLMs, you can track the revenue they generate over time. And here's where it gets interesting for SEO teams specifically: self-reported attribution captures influence from all discovery channels, organic search and LLMs included.
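To make the calculation concrete, here is a minimal Python sketch of self-reported attribution. The field names (`source`, `revenue`, `prompt`), the source labels, and the sample records are hypothetical; they do not reflect how Tally or any other product stores this data.

```python
# Hypothetical signup records built from a "How did you hear about us?" field.
signups = [
    {"source": "AI", "revenue": 1200.0, "prompt": "best form builder"},
    {"source": "Google", "revenue": 800.0, "prompt": None},
    {"source": "AI", "revenue": 0.0, "prompt": "free survey tool"},
    {"source": "Referral", "revenue": 2000.0, "prompt": None},
]


def llm_share(signups, source="AI"):
    """Share of signups and of revenue self-attributed to the given source."""
    total_count = len(signups)
    total_revenue = sum(s["revenue"] for s in signups)
    from_llm = [s for s in signups if s["source"] == source]
    llm_revenue = sum(s["revenue"] for s in from_llm)
    return {
        "signup_share": len(from_llm) / total_count if total_count else 0.0,
        "revenue_share": llm_revenue / total_revenue if total_revenue else 0.0,
        # Prompts customers reported using, useful for the prompt library.
        "prompts": [s["prompt"] for s in from_llm if s["prompt"]],
    }


summary = llm_share(signups)
print(f"Signups from AI: {summary['signup_share']:.0%}")
print(f"Revenue from AI: {summary['revenue_share']:.0%}")
```

Re-running the same calculation as revenue accrues shows how the LLM-attributed share develops over time.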

If Google Analytics is already underreporting your SEO impact, this gives you a much stronger case because you're showing the full picture of how your search presence drives growth and the actual business

Traffic from AI searches: Useful, but incomplete

Traffic feels like a natural metric to reach for because it's familiar and easy to report. However, AI search users rarely click. When ChatGPT recommends a CRM, there are often no links in the answer. So the user either searches Google next - and that session gets attributed to Google - or types the brand directly into their browser, which shows up as direct traffic. Either way, the AI search engine gets no credit.

This is backed up by how AI-influenced journeys actually work. According to Eight Oh Two's AI and Search Behavior study (2026), 37% of consumers now start searches with AI rather than Google, but 85% still cross-reference through traditional search before converting.

One journey, two channels, and most attribution models only capture the second one. The study has limitations, but the directional finding matches what I see in practice.

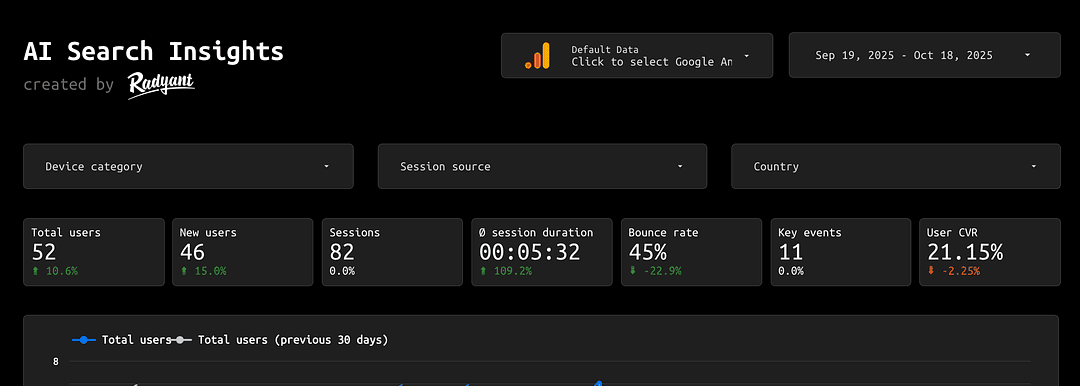

To be clear, I’m not suggesting you ignore traffic from LLM visits. There are excellent free templates available to help you track this, such as the one built by Radyant shown in the screenshot.

But you have to be careful with how you interpret that data. Because of how LLMs interact with websites, the current tracking methods can’t capture most of the visits, meaning your numbers will always be lower than the reality.
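For the visits that do arrive with a referrer, a simple classification by hostname is one way to separate them out. The sketch below is illustrative only: the hostname list is an assumption, is certainly incomplete, and will need maintaining, and it is not the logic of the Radyant template. Visits without a referrer fall into "direct", which is exactly where much AI-influenced traffic hides.

```python
from urllib.parse import urlparse

# Assumed, incomplete list of referrer hostnames used by AI assistants.
AI_REFERRER_HOSTS = {
    "chatgpt.com",
    "chat.openai.com",
    "perplexity.ai",
    "www.perplexity.ai",
    "gemini.google.com",
    "copilot.microsoft.com",
}


def classify_referrer(referrer):
    """Label a session as 'ai', 'direct' (no referrer), or 'other'."""
    if not referrer:
        return "direct"
    host = (urlparse(referrer).hostname or "").lower()
    if host in AI_REFERRER_HOSTS:
        return "ai"
    return "other"


# Hypothetical session referrers for illustration.
sessions = ["https://chatgpt.com/", "", "https://www.google.com/search?q=crm"]
labels = [classify_referrer(r) for r in sessions]
print(labels)
```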

What other experts prioritize for AI search KPIs

This topic is new enough that even experts still disagree on the right approach. What I've shared here is built on client conversations, my own optimization work, and absorbing nearly everything written on the subject, but I don't think one perspective is enough.

I asked a few experts I trust to weigh in on what they'd prioritize for brands trying to understand their AI search performance and why.

“We should stop using traffic as a reliable KPI, and start using a blend of Branding and Performance KPIs: AI visibility, sentiment, purchases, and revenue. We also should track visibility at a topical level for relevant questions through the customer journey for each of our product/service lines, rather than individual prompts due to their dynamic nature. Additionally, we also should start asking customers how they discovered us, for better attribution, with quick post-purchase surveys or during their onboarding process, depending on our business model.”

In June 2024, we first described how randomness works in AI and proposed frameworks to measure brand performance in AI as part of a Reforge Webinar. However, there is increasing scrutiny about how accurate these tools are and confusion about how randomness in AI works. By applying basic statistics to a sample of responses, we can successfully estimate a brand's visibility and ranking.

1. AI responds randomly, but the randomness is predictable. Responses are generated from a probability distribution, and the generative process is easy to understand even without a technical background.
2. Measuring visibility is straightforward. We need to generate multiple responses, but 10 is enough for a quick estimate for entity comparison prompts.
3. Measuring ranking is also straightforward. Again, a sample of 10 responses is enough for a quick estimate.
4. Responses from APIs, logged-out accounts, and logged-in accounts can vary significantly. All prompt-tracking tools either track logged-out accounts or use APIs. This means that prompt tracking tools can be used as directional data, taking this variation into account.

Take the most valuable topics you want to win, run multiple variations of the same prompt, run them multiple times, then calculate the % of time your brand appears and the distribution of your rank or position. Track using both an automated scraping tool like Peec AI as well as manually tracking with a logged-in account.
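To show what "basic statistics on a sample of responses" can look like, here is a minimal Python sketch that estimates visibility from repeated runs of a prompt and attaches a Wilson score interval. The choice of the Wilson interval, the 95% level, and the sample counts are assumptions for this example; the source does not specify which statistic to use.

```python
import math


def wilson_interval(hits, n, z=1.96):
    """Wilson score interval for a proportion (z=1.96 is roughly 95%)."""
    if n <= 0:
        raise ValueError("n must be positive")
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    margin = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return max(0.0, center - margin), min(1.0, center + margin)


# Hypothetical result: the brand appeared in 7 of 10 sampled responses.
hits, n = 7, 10
low, high = wilson_interval(hits, n)
print(f"Visibility estimate: {hits / n:.0%} (approx. 95% interval {low:.0%} to {high:.0%})")
```

With only 10 responses the interval is wide, which fits treating small samples as a quick, directional estimate; more runs narrow it.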

Key takeaway: Start tracking AI search visibility now

AI search is already influencing buying decisions. The brands that win won't be the ones who waited for perfect measurement but the ones who started tracking early and built the data over time.

The metrics are there. Visibility tells you if you're in the room. Ranking tells you how prominently. Sentiment tells you what AI is saying about you when you are. And business metrics, however imperfect, help connect all of it to revenue.

Our advice is to start measuring now. The framework might not yet be complete, but every week you're not tracking is a week you can't explain why your AI search efforts matter: to your clients, your leadership, or yourself.